Those are my notes on taking some LiDAR data and testing different commands in PDAL. If you like, you can follow along using your own data.

Most probably I’ll de adding or deleting stuff, but you can take a look to see some of the main operations that can be made on point clouds using PDAL.

This is an adaptation of the original workshop from the PDAL website, Point Cloud Processing and Analysis with PDAL, the single difference being that I use the same data for every operation and show the commands output, too, while in the workshop there are used different data sources.

The workshop can be downloaded from here, too.

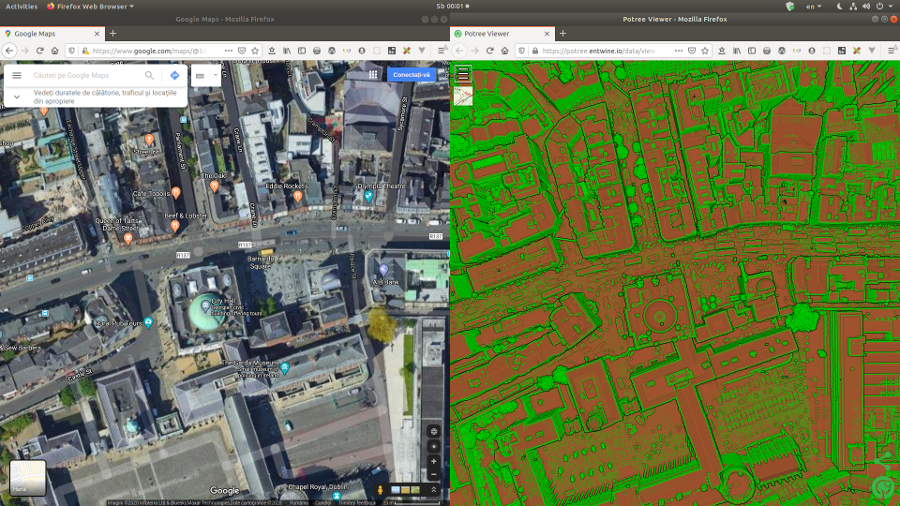

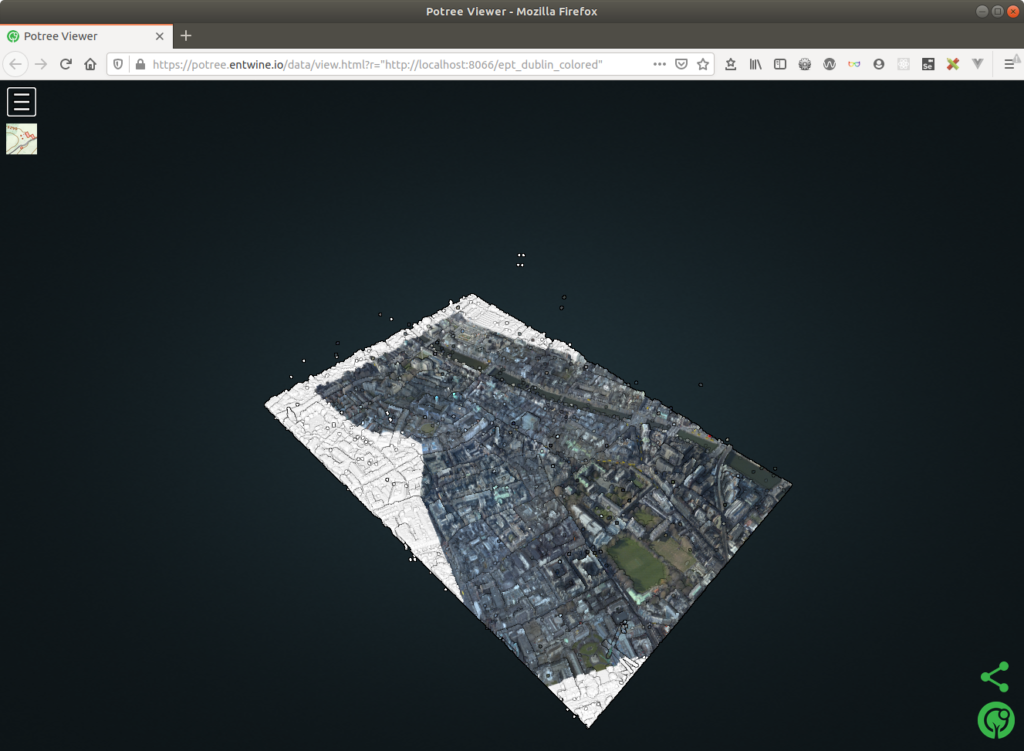

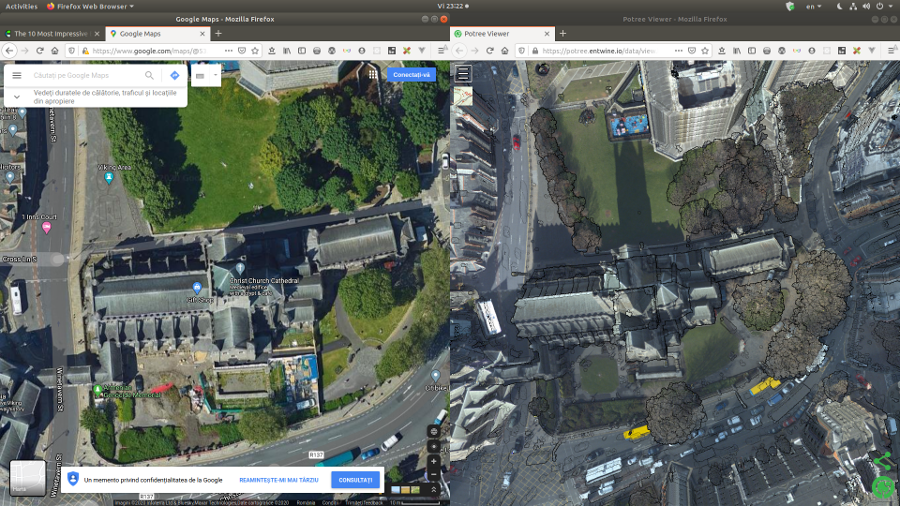

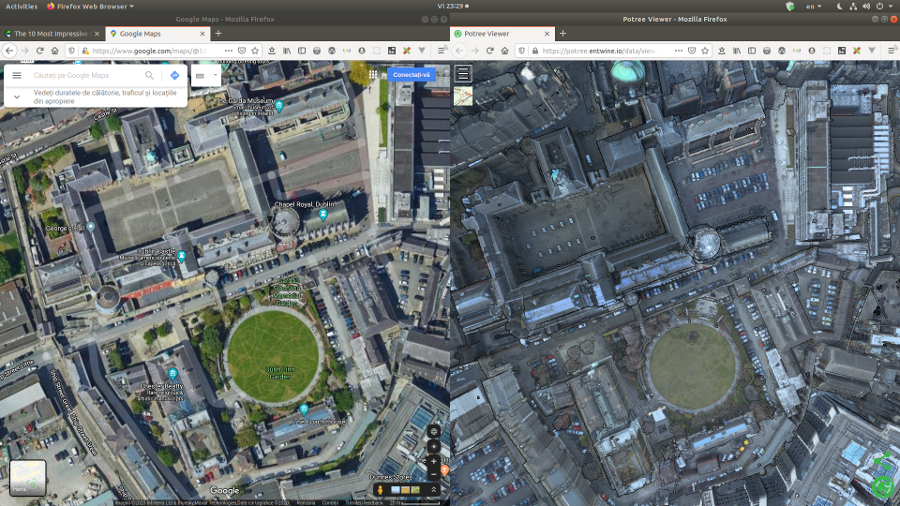

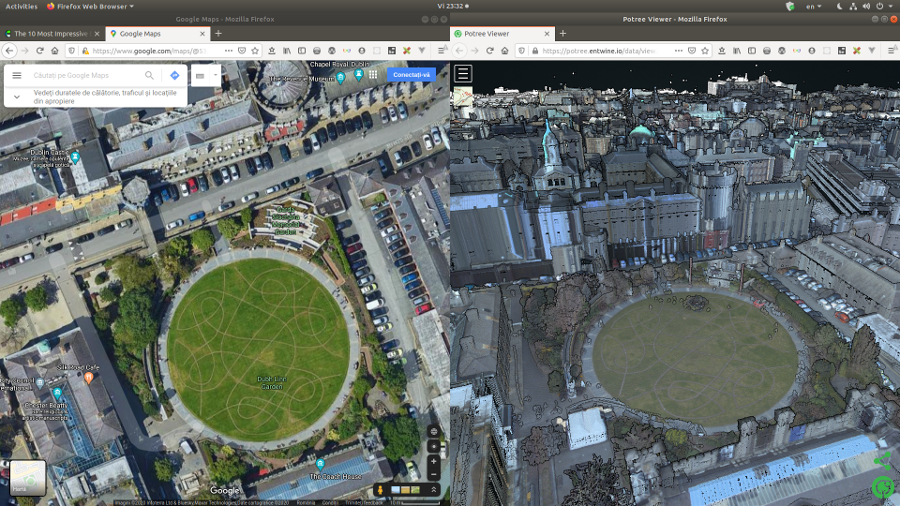

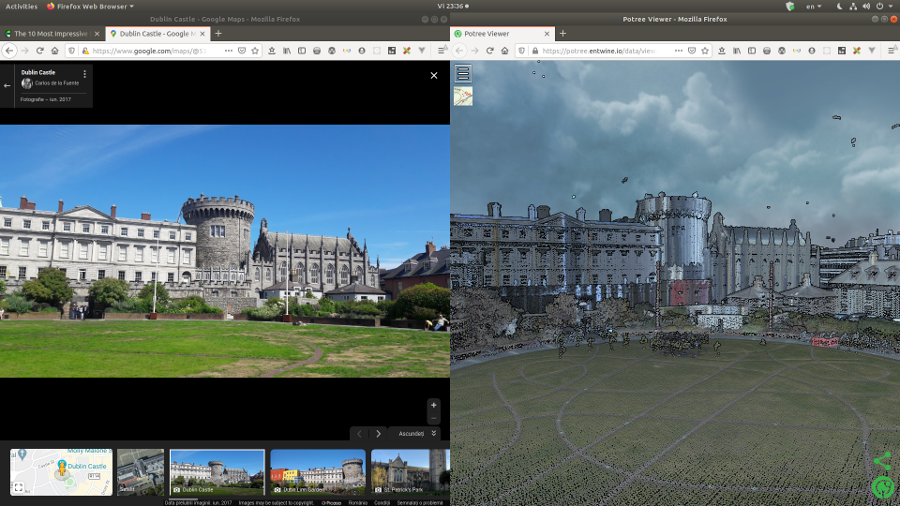

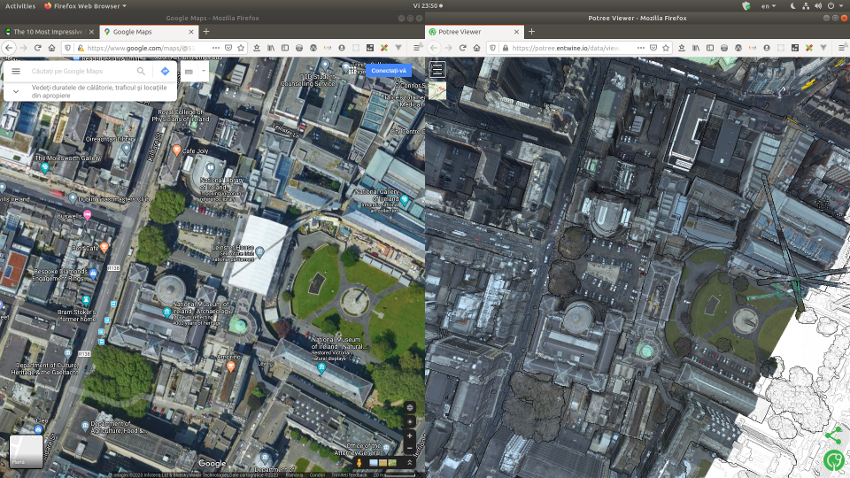

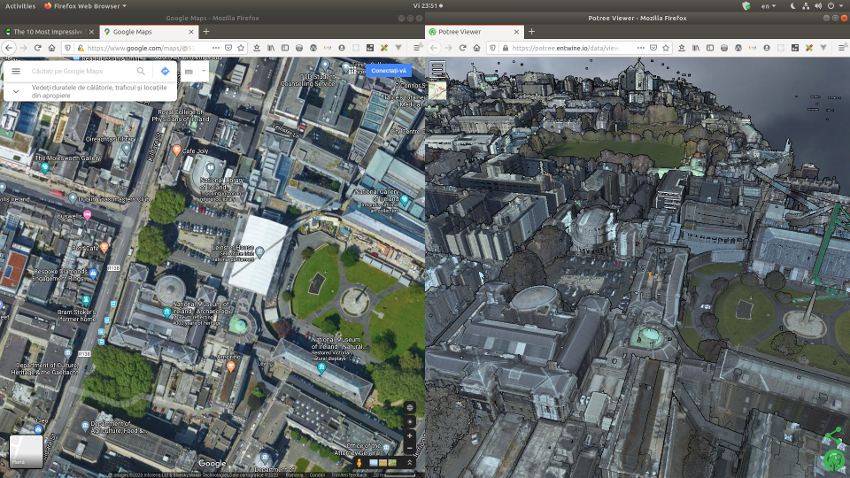

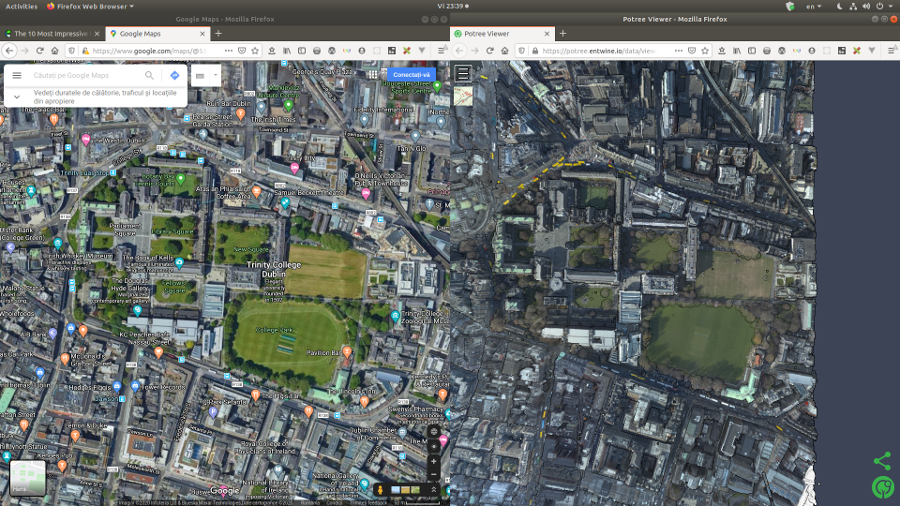

The colored point cloud data that we will be using throughout this post can be viewed here: https://potree.entwine.io/data/view.html?r=”https://maptheclouds.com/ept_dublin_colored/”.

You can use Fugro Viewer or LASTools to view LiDAR files on desktop, but only in Windows! The Linux users, although forgotten, are lucky to have Potree and Plasio that can consume Entwine Point Tile (EPT) datasets in the browser. But let’s just approach LiDAR with baby steps before digging too deep. 🙂

LiDAR refers to a remote sensing technology that emits intense, focused beams of light and measures the time it takes for the reflections to be detected by the sensor. This information is used to compute ranges, or distances, to objects. In this manner, lidar is analogous to radar (radio detecting and ranging), except that it is based on discrete pulses of laser light. The three-dimensional coordinates (e.g., x,y,z or latitude, longitude, and elevation) of the target objects are computed from 1) the time difference between the laser pulse being emitted and returned, 2) the angle at which the pulse was “fired,” and 3) the absolute location of the sensor on or above the surface of the Earth.

I won’t go into details about LiDAR, feel free to read this comprehensive guide: Lidar 101: An Introduction to Lidar Technology, Data, and Applications.

Download the data

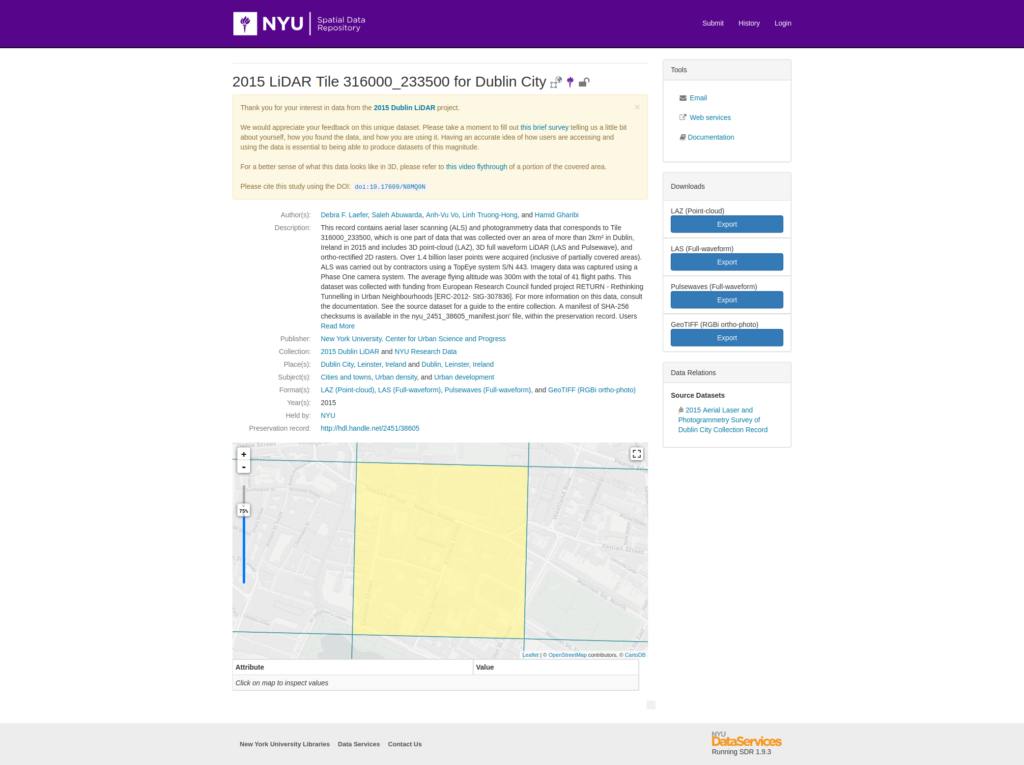

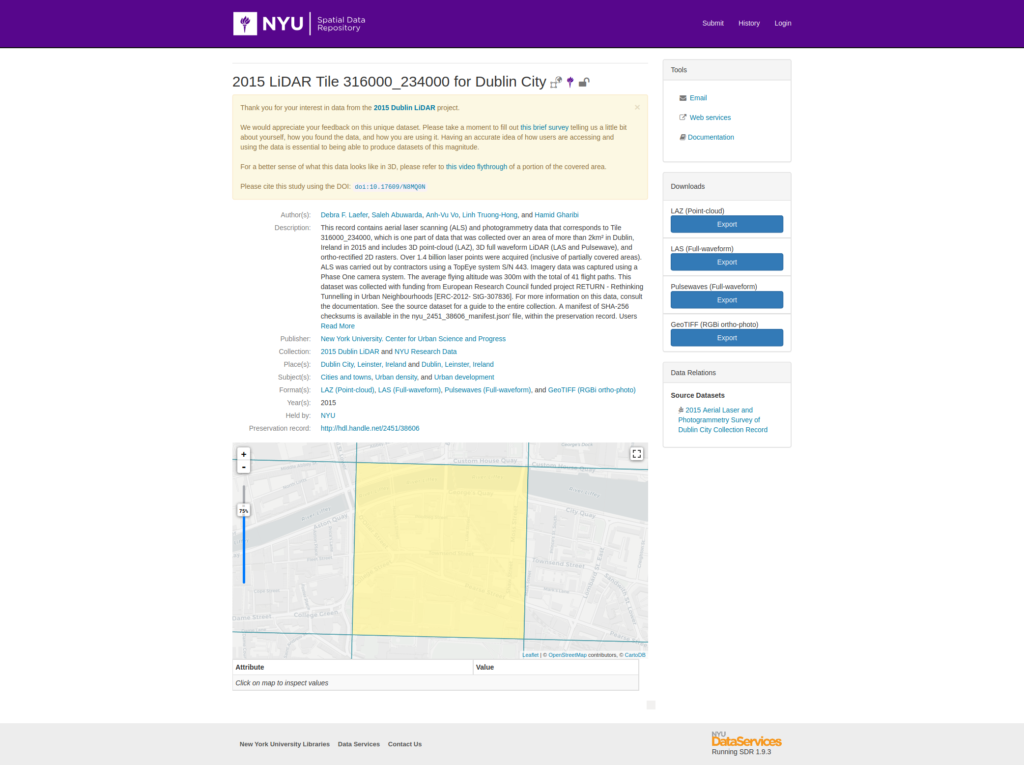

You can use your own files, but I’ll be using free data for Dublin, a part of the densest public LiDAR data set ever collected by the New York University in 2015, via its Center for Urban Science and Progress. I will download some LAZ point cloud tiles and their corresponding ortophotos from the 2015 Aerial Laser and Photogrammetry Survey of Dublin City Collection Record.

Regarding the collection: “This record serves as an index to a suite of high density, aerial remote sensing data for a 2km² area of Dublin, Ireland obtained at an average flying altitude of 300m. Collected in March 2015, the data include aerial laser scanning (ALS) from 41 flight paths in the form of a 3D point-cloud (LAZ) and 3D full waveform ALS (LAS and Pulsewave), and imagery data including ortho-rectified 2D rasters (RGBi) and oblique images. The ALS data consist of over 1.4 billion points (inclusive of partially covered areas) and were acquired by a TopEye system S/N 443. Imagery data were captured using a Phase One camera system. In this data offering, the ALS and imagery data are structured both by flight paths and by 500 × 500 m rectangular tiles.” (doi:10.17609/N8MQ0N)

The original files are:

- 2015 LiDAR Tile 315000_234000 for Dublin City. Distributed by NYU.

doi:10.17609/N8MQ0N. Accessed: 2020-04-03- https://geo.nyu.edu/catalog/nyu_2451_38595

- https://archive.nyu.edu/retrieve/79822/nyu_2451_38595_pc_T_315000_234000.zip

- https://archive.nyu.edu/retrieve/79825/nyu_2451_38595_orthophoto_T_315000_234000.zip

- https://geo.nyu.edu/catalog/nyu_2451_38595

- 2015 LiDAR Tile 315500_234000 for Dublin City. Distributed by NYU.

doi:10.17609/N8MQ0N. Accessed: 2020-04-03- https://geo.nyu.edu/catalog/nyu_2451_38601

- https://archive.nyu.edu/retrieve/79848/nyu_2451_38601_pc_T_315500_234000.zip

- https://archive.nyu.edu/retrieve/79851/nyu_2451_38601_orthophoto_T_315500_234000.zip

- https://geo.nyu.edu/catalog/nyu_2451_38601

- 2015 LiDAR Tile 315000_233500 for Dublin City. Distributed by NYU.

doi:10.17609/N8MQ0N. Accessed: 2020-04-03- https://geo.nyu.edu/catalog/nyu_2451_38594

- https://archive.nyu.edu/retrieve/79817/nyu_2451_38594_pc_T_315000_233500.zip

- https://archive.nyu.edu/retrieve/79820/nyu_2451_38594_orthophoto_T_315000_233500.zip

- https://geo.nyu.edu/catalog/nyu_2451_38594

- 2015 LiDAR Tile 315500_233500 for Dublin City. Distributed by NYU.

doi:10.17609/N8MQ0N. Accessed: 2020-04-03- https://geo.nyu.edu/catalog/nyu_2451_38600

- https://archive.nyu.edu/retrieve/79843/nyu_2451_38600_pc_T_315500_233500.zip

- https://archive.nyu.edu/retrieve/79846/nyu_2451_38600_orthophoto_T_315500_233500.zip

- https://geo.nyu.edu/catalog/nyu_2451_38600

- 2015 LiDAR Tile 316000_233500 for Dublin City. Distributed by NYU.

doi:10.17609/N8MQ0N. Accessed: 2020-04-03- https://geo.nyu.edu/catalog/nyu_2451_38600

- https://archive.nyu.edu/retrieve/79891/nyu_2451_38606_pc_T_316000_234000.zip

- https://archive.nyu.edu/retrieve/79893/nyu_2451_38606_orthophoto_T_316000_234000.zip

- https://geo.nyu.edu/catalog/nyu_2451_38600

- 2015 LiDAR Tile 316000_234000 for Dublin City. Distributed by NYU.

doi:10.17609/N8MQ0N. Accessed: 2020-04-03- https://geo.nyu.edu/catalog/nyu_2451_38606

- https://archive.nyu.edu/retrieve/79887/nyu_2451_38605_pc_T_316000_233500.zip

- https://archive.nyu.edu/retrieve/79889/nyu_2451_38605_orthophoto_T_316000_233500.zip

- https://geo.nyu.edu/catalog/nyu_2451_38606

We create a working folder, dublin and two subfolders named raw_data and imagery, then move all the downloaded point cloud data, respectively the images into it. You can download the files manually or using wget.

# go home, create subdirectory and parent directory

cd && mkdir -pv dublin/raw_data && mkdir -pv dublin/imagery

cd dublin/raw_data

wget --no-check-certificate https://archive.nyu.edu/retrieve/79822/nyu_2451_38595_pc_T_315000_234000.zip

wget --no-check-certificate https://archive.nyu.edu/retrieve/79848/nyu_2451_38601_pc_T_315500_234000.zip

wget --no-check-certificate https://archive.nyu.edu/retrieve/79817/nyu_2451_38594_pc_T_315000_233500.zip

wget --no-check-certificate https://archive.nyu.edu/retrieve/79843/nyu_2451_38600_pc_T_315500_233500.zip

wget --no-check-certificate https://archive.nyu.edu/retrieve/79887/nyu_2451_38605_pc_T_316000_233500.zip

wget --no-check-certificate https://archive.nyu.edu/retrieve/79891/nyu_2451_38606_pc_T_316000_234000.zip

cd ../imagery

wget --no-check-certificate https://archive.nyu.edu/retrieve/79825/nyu_2451_38595_orthophoto_T_315000_234000.zip

wget --no-check-certificate https://archive.nyu.edu/retrieve/79851/nyu_2451_38601_orthophoto_T_315500_234000.zip

wget --no-check-certificate https://archive.nyu.edu/retrieve/79820/nyu_2451_38594_orthophoto_T_315000_233500.zip

wget --no-check-certificate https://archive.nyu.edu/retrieve/79846/nyu_2451_38600_orthophoto_T_315500_233500.zip

wget --no-check-certificate https://archive.nyu.edu/retrieve/79893/nyu_2451_38606_orthophoto_T_316000_234000.zip

wget --no-check-certificate https://archive.nyu.edu/retrieve/79889/nyu_2451_38605_orthophoto_T_316000_233500.zip[...]

wget --no-check-certificate https://archive.nyu.edu/retrieve/79848/nyu_2451_38601_pc_T_315500_234000.zip

--2020-03-04 18:24:22-- https://archive.nyu.edu/retrieve/79848/nyu_2451_38601_pc_T_315500_234000.zip

Resolving archive.nyu.edu (archive.nyu.edu)... 128.122.108.142

Connecting to archive.nyu.edu (archive.nyu.edu)|128.122.108.142|:443... connected.

WARNING: cannot verify archive.nyu.edu's certificate, issued by ‘CN=InCommon RSA Server CA,OU=InCommon,O=Internet2,L=Ann Arbor,ST=MI,C=US’:

Unable to locally verify the issuer's authority.

HTTP request sent, awaiting response... 200 OK

Length: 410650750 (392M) [application/octet-stream]

Saving to: ‘nyu_2451_38601_pc_T_315500_234000.zip’

nyu_2451_38601_pc_T_315500_234 100%[==================================================>] 391,63M 8,62MB/s in 34s

2020-03-04 18:24:56 (11,5 MB/s) - ‘nyu_2451_38601_pc_T_315500_234000.zip’ saved [410650750/410650750]

[...]raw_data/

.

├── imagery

│ ├── nyu_2451_38594_orthophoto_T_315000_233500.zip

│ ├── nyu_2451_38595_orthophoto_T_315000_234000.zip

│ ├── nyu_2451_38600_orthophoto_T_315500_233500.zip

│ ├── nyu_2451_38600_orthophoto_T_315500_234000.zip

│ ├── nyu_2451_38600_orthophoto_T_316000_233500.zip

│ └── nyu_2451_38601_orthophoto_T_316000_234000.zip

└── raw_data

├── nyu_2451_38594_pc_T_315000_233500.zip

├── nyu_2451_38595_pc_T_315000_234000.zip

├── nyu_2451_38600_pc_T_315500_233500.zip

├── nyu_2451_38601_pc_T_315500_234000.zip

├── nyu_2451_38600_pc_T_316000_233500.zip

└── nyu_2451_38601_pc_T_316000_234000.zipThen uzip the files and remove the original zip in order to preserve space.

cd raw_data

unzip \*.zip

rm *.zip

cd ../imagery

unzip \*.zip

rm *.zip

[...]

myuser@comp:~/dublin/imagery$ unzip \*.zip

Archive: nyu_2451_38595_orthophoto_T_315000_234000.zip

creating: nyu_2451_38595_rgbi/

inflating: nyu_2451_38595_rgbi/315000_234000.tfw

inflating: nyu_2451_38595_rgbi/315000_234000.tif

[...]

6 archives were successfully processed.

myuser@comp:~/dublin/imagery$ rm *.zip

myuser@comp:~/dublin/imagery$myuser@comp:~/dublin$ tree -L 3

.

├── imagery

│ ├── nyu_2451_38594_rgbi

│ │ ├── 315000_233500.tfw

│ │ └── 315000_233500.tif

│ ├── [...]

└── raw_data

├── nyu_2451_38594_laz

│ └── T_315000_233500.laz

├── [...]Now get the LAZ files outside their folders. We will leave the images alone for now.

cd raw_data/

mv */*.laz .

rm -rf nyu*_lazmyuser@comp:~/dublin/raw_data$ tree

.

├── T_315000_233500.laz

├── T_315000_234000.laz

├── T_315500_233500.laz

├── T_315500_234000.laz

├── T_316000_233500.laz

└── T_316000_234000.laz

0 directories, 6 files

myuser@comp:~/dublin/raw_data$Install PDAL

You can read this other post to find out how to install PDAL. Every time you want to access the environment where PDAL was installed, useconda activate followed by the environment name, in our case new_clouds.

myuser@comp:~/dublin$ conda activate new_clouds

(new_clouds) myuser@comp:~/dublin$I will refer to Linux, though the commands for Windows will be mentioned, too. NOTE: PDAL commands, except batch processing, are the same in Windows and Linux, but the line separator differs: ^ in Windows and \ in Linux.

Play

Printing the first point (src)

# List the LAZ files

cd ~/dublin

ls -lah raw_data

# Query the las data using pdal info

pdal info raw_data/T_315000_233500.laz -p 0

pdal info raw_data/T_315000_234000.laz -p 0(new_clouds) myuser@comp:~/dublin$ pdal info raw_data/T_315000_233500.laz -p 0

proj_create_from_database: datum not found

proj_create_from_database: ellipsoid not found

proj_create_from_database: prime meridian not found

{

"filename": "raw_data/T_315000_233500.laz",

"pdal_version": "2.0.1 (git-version: 6600e6)",

"points":

{

"point":

{

"Classification": 4,

"EdgeOfFlightLine": 0,

"GpsTime": 388567.0446,

"Intensity": 8,

"NumberOfReturns": 2,

"PointId": 0,

"PointSourceId": 0,

"ReturnNumber": 2,

"ScanAngleRank": 30,

"ScanDirectionFlag": 1,

"UserData": 0,

"X": 315459.722,

"Y": 233580.778,

"Z": -61.811

}

}

}

(new_clouds) myuser@comp:~/dublin$ pdal info raw_data/T_315000_234000.laz -p 0

proj_create_from_database: datum not found

proj_create_from_database: ellipsoid not found

proj_create_from_database: prime meridian not found

{

"filename": "raw_data/T_315000_234000.laz",

"pdal_version": "2.0.1 (git-version: 6600e6)",

"points":

{

"point":

{

"Classification": 2,

"EdgeOfFlightLine": 0,

"GpsTime": 388615.3997,

"Intensity": 8,

"NumberOfReturns": 2,

"PointId": 0,

"PointSourceId": 0,

"ReturnNumber": 2,

"ScanAngleRank": 34,

"ScanDirectionFlag": 1,

"UserData": 0,

"X": 315000.379,

"Y": 234036.834,

"Z": 5.039

}

}

}

(new_clouds) myuser@comp:~/dublin$Printing file metadata (src)

pdal info raw_data/T_315000_233500.laz --metadata(new_clouds) myuser@comp:~/dublin$ pdal info raw_data/T_315000_233500.laz --metadata

proj_create_from_database: datum not found

proj_create_from_database: ellipsoid not found

proj_create_from_database: prime meridian not found

{

"filename": "raw_data/T_315000_233500.laz",

"metadata":

{

"comp_spatialreference": "COMPD_CS[\"DAT1965, AiryMod1849, TM65 / Irish Grid\",PROJCS[\"TM65 / Irish Grid\",GEOGCS[\"DAT1965, AiryMod1849\",DATUM[\"unnamed\",SPHEROID[\"unnamed\",6377340.189,299.324964599999]],PRIMEM[\"Greenwich\",0],UNIT[\"degree\",0.0174532925199433,AUTHORITY[\"EPSG\",\"9122\"]]],PROJECTION[\"Transverse_Mercator\"],PARAMETER[\"latitude_of_origin\",53.5],PARAMETER[\"central_meridian\",-8],PARAMETER[\"scale_factor\",1.000035],PARAMETER[\"false_easting\",200000.32],PARAMETER[\"false_northing\",250000.08],UNIT[\"metre\",1,AUTHORITY[\"EPSG\",\"9001\"]],AXIS[\"Easting\",EAST],AXIS[\"Northing\",NORTH]],VERT_CS[\"unknown\",VERT_DATUM[\"unknown\",2005],UNIT[\"metre\",1,AUTHORITY[\"EPSG\",\"9001\"]],AXIS[\"Up\",UP]]]",

"compressed": true,

"count": 89824621,

"creation_doy": 237,

"creation_year": 2015,

"dataformat_id": 1,

"dataoffset": 840,

"filesource_id": 0,

"global_encoding": 0,

"global_encoding_base64": "AAA=",

"header_size": 227,

"major_version": 1,

"maxx": 315499.999,

"maxy": 233999.999,

"maxz": 361.183,

"minor_version": 2,

"minx": 315000,

"miny": 233500,

"minz": -93.254,

"offset_x": 314000,

"offset_y": 230000,

"offset_z": 0,

"point_length": 28,

"project_id": "76B01A0C-C5F9-2A4A-8E10-D3CBC357250B",

"scale_x": 0.001,

"scale_y": 0.001,

"scale_z": 0.001,

"software_id": "LAStools, FME Desktop, UMGPC",

"spatialreference": "COMPD_CS[\"DAT1965, AiryMod1849, TM65 / Irish Grid\",PROJCS[\"TM65 / Irish Grid\",GEOGCS[\"DAT1965, AiryMod1849\",DATUM[\"unnamed\",SPHEROID[\"unnamed\",6377340.189,299.324964599999]],PRIMEM[\"Greenwich\",0],UNIT[\"degree\",0.0174532925199433,AUTHORITY[\"EPSG\",\"9122\"]]],PROJECTION[\"Transverse_Mercator\"],PARAMETER[\"latitude_of_origin\",53.5],PARAMETER[\"central_meridian\",-8],PARAMETER[\"scale_factor\",1.000035],PARAMETER[\"false_easting\",200000.32],PARAMETER[\"false_northing\",250000.08],UNIT[\"metre\",1,AUTHORITY[\"EPSG\",\"9001\"]],AXIS[\"Easting\",EAST],AXIS[\"Northing\",NORTH]],VERT_CS[\"unknown\",VERT_DATUM[\"unknown\",2005],UNIT[\"metre\",1,AUTHORITY[\"EPSG\",\"9001\"]],AXIS[\"Up\",UP]]]",

"srs":

{

"compoundwkt": "COMPD_CS[\"DAT1965, AiryMod1849, TM65 / Irish Grid\",PROJCS[\"TM65 / Irish Grid\",GEOGCS[\"DAT1965, AiryMod1849\",DATUM[\"unnamed\",SPHEROID[\"unnamed\",6377340.189,299.324964599999]],PRIMEM[\"Greenwich\",0],UNIT[\"degree\",0.0174532925199433,AUTHORITY[\"EPSG\",\"9122\"]]],PROJECTION[\"Transverse_Mercator\"],PARAMETER[\"latitude_of_origin\",53.5],PARAMETER[\"central_meridian\",-8],PARAMETER[\"scale_factor\",1.000035],PARAMETER[\"false_easting\",200000.32],PARAMETER[\"false_northing\",250000.08],UNIT[\"metre\",1,AUTHORITY[\"EPSG\",\"9001\"]],AXIS[\"Easting\",EAST],AXIS[\"Northing\",NORTH]],VERT_CS[\"unknown\",VERT_DATUM[\"unknown\",2005],UNIT[\"metre\",1,AUTHORITY[\"EPSG\",\"9001\"]],AXIS[\"Up\",UP]]]",

"horizontal": "PROJCS[\"TM65 / Irish Grid\",GEOGCS[\"DAT1965, AiryMod1849\",DATUM[\"unnamed\",SPHEROID[\"unnamed\",6377340.189,299.324964599999]],PRIMEM[\"Greenwich\",0],UNIT[\"degree\",0.0174532925199433,AUTHORITY[\"EPSG\",\"9122\"]]],PROJECTION[\"Transverse_Mercator\"],PARAMETER[\"latitude_of_origin\",53.5],PARAMETER[\"central_meridian\",-8],PARAMETER[\"scale_factor\",1.000035],PARAMETER[\"false_easting\",200000.32],PARAMETER[\"false_northing\",250000.08],UNIT[\"metre\",1,AUTHORITY[\"EPSG\",\"9001\"]],AXIS[\"Easting\",EAST],AXIS[\"Northing\",NORTH]]",

"isgeocentric": false,

"isgeographic": false,

"prettycompoundwkt": "COMPD_CS[\"DAT1965, AiryMod1849, TM65 / Irish Grid\",\n PROJCS[\"TM65 / Irish Grid\",\n GEOGCS[\"DAT1965, AiryMod1849\",\n DATUM[\"unnamed\",\n SPHEROID[\"unnamed\",6377340.189,299.324964599999]],\n PRIMEM[\"Greenwich\",0],\n UNIT[\"degree\",0.0174532925199433,\n AUTHORITY[\"EPSG\",\"9122\"]]],\n PROJECTION[\"Transverse_Mercator\"],\n PARAMETER[\"latitude_of_origin\",53.5],\n PARAMETER[\"central_meridian\",-8],\n PARAMETER[\"scale_factor\",1.000035],\n PARAMETER[\"false_easting\",200000.32],\n PARAMETER[\"false_northing\",250000.08],\n UNIT[\"metre\",1,\n AUTHORITY[\"EPSG\",\"9001\"]],\n AXIS[\"Easting\",EAST],\n AXIS[\"Northing\",NORTH]],\n VERT_CS[\"unknown\",\n VERT_DATUM[\"unknown\",2005],\n UNIT[\"metre\",1,\n AUTHORITY[\"EPSG\",\"9001\"]],\n AXIS[\"Up\",UP]]]",

"prettywkt": "PROJCS[\"TM65 / Irish Grid\",\n GEOGCS[\"DAT1965, AiryMod1849\",\n DATUM[\"unnamed\",\n SPHEROID[\"unnamed\",6377340.189,299.324964599999]],\n PRIMEM[\"Greenwich\",0],\n UNIT[\"degree\",0.0174532925199433,\n AUTHORITY[\"EPSG\",\"9122\"]]],\n PROJECTION[\"Transverse_Mercator\"],\n PARAMETER[\"latitude_of_origin\",53.5],\n PARAMETER[\"central_meridian\",-8],\n PARAMETER[\"scale_factor\",1.000035],\n PARAMETER[\"false_easting\",200000.32],\n PARAMETER[\"false_northing\",250000.08],\n UNIT[\"metre\",1,\n AUTHORITY[\"EPSG\",\"9001\"]],\n AXIS[\"Easting\",EAST],\n AXIS[\"Northing\",NORTH]]",

"proj4": "+proj=tmerc +lat_0=53.5 +lon_0=-8 +k=1.000035 +x_0=200000.32 +y_0=250000.08 +a=6377340.189 +rf=299.324964599999 +units=m +vunits=m +no_defs",

"units":

{

"horizontal": "metre",

"vertical": "metre"

},

"vertical": "VERT_CS[\"unknown\",VERT_DATUM[\"unknown\",2005],UNIT[\"metre\",1,AUTHORITY[\"EPSG\",\"9001\"]],AXIS[\"Up\",UP]]",

"wkt": "PROJCS[\"TM65 / Irish Grid\",GEOGCS[\"DAT1965, AiryMod1849\",DATUM[\"unnamed\",SPHEROID[\"unnamed\",6377340.189,299.324964599999]],PRIMEM[\"Greenwich\",0],UNIT[\"degree\",0.0174532925199433,AUTHORITY[\"EPSG\",\"9122\"]]],PROJECTION[\"Transverse_Mercator\"],PARAMETER[\"latitude_of_origin\",53.5],PARAMETER[\"central_meridian\",-8],PARAMETER[\"scale_factor\",1.000035],PARAMETER[\"false_easting\",200000.32],PARAMETER[\"false_northing\",250000.08],UNIT[\"metre\",1,AUTHORITY[\"EPSG\",\"9001\"]],AXIS[\"Easting\",EAST],AXIS[\"Northing\",NORTH]]"

},

"system_id": "RiPROCESS 1.6.5",

"vlr_0":

{

"data": "AQABAAAAGQAABAAAAQABAAEEAAABAAIAAgSxhygAAAAACAAAAQD/fwEIsYcVADoAAggAAAEA/38DCAAAAQD/fwQIAAABACkjBggAAAEAjiMICAAAAQD/fwkIsIcBAAYACwiwhwEABwAMCAAAAQCOIw0IsIcBAAUAAAwAAAEA/38BDLGHEgAoAAIMAAABAP9/AwwAAAEAAQAEDAAAAQApIwgMsIcBAAEACQywhwEAAAAKDLCHAQACAAsMsIcBAAMAFAywhwEABAADEAAAAQApIw==",

"description": "GeoKeyDirectoryTag (mandatory)",

"record_id": 34735,

"user_id": "LASF_Projection"

},

"vlr_1":

{

"data": "AAAAAADASkAAAAAAAAAgwPYoXI8CaghBPQrXo4CEDkGOrz2zJADwPwAAAAAAAAAAdZMYDN9TWEG5lRQOM7VyQA==",

"description": "GeoDoubleParamsTag (optional)",

"record_id": 34736,

"user_id": "LASF_Projection"

},

"vlr_2":

{

"data": "REFUMTk2NSwgQWlyeU1vZDE4NDksIFRNNjUgLyBJcmlzaCBHcmlkfFRNNjUgLyBJcmlzaCBHcmlkfERBVDE5NjUsIEFpcnlNb2QxODQ5fA==",

"description": "GeoASCIIParamsTag (optional)",

"record_id": 34737,

"user_id": "LASF_Projection"

},

"vlr_3":

{

"data": "AgAAAAIFAgAAAAAAUMMAAP////////////////////8CAAYAFAACAAcACAACAA==",

"description": "by laszip of LAStools (170327)",

"record_id": 22204,

"user_id": "laszip encoded"

}

},

"pdal_version": "2.0.1 (git-version: 6600e6)"

}

(new_clouds) myuser@comp:~/dublin$Install jq with sudo apt install jq (on Windows from https://stedolan.github.io/jq), then filter the JSON query result using jq.

pdal info raw_data/T_315000_233500.laz --metadata | jq ".metadata.maxx, .metadata.count"(new_clouds) myuser@comp:~/dublin$ pdal info raw_data/T_315000_233500.laz --metadata | jq ".metadata.maxx, .metadata.count"

proj_create_from_database: datum not found

proj_create_from_database: ellipsoid not found

proj_create_from_database: prime meridian not found

315499.999

89824621

(new_clouds) myuser@comp:~/dublin$Find the midpoint of the bounding cube (src)

(new_clouds) myuser@comp:~/dublin$ pdal info raw_data/T_315000_233500.laz --all | jq .stats.bbox.native.bbox

proj_create_from_database: datum not found

proj_create_from_database: ellipsoid not found

proj_create_from_database: prime meridian not found

{

"maxx": 315499.999,

"maxy": 233999.999,

"maxz": 361.183,

"minx": 315000,

"miny": 233500,

"minz": -93.254

}

(new_clouds) myuser@comp:~/dublin$Calculate the average the X, Y, and Z values for this tile: min + (max – min)/2

- x = 315000 + (315499.999 – 315000)/2 = 315250

- y = 233500 + (233999.999 – 233500)/2 = 233750

- z = -93.254 + (361.183 – (-93.254))/2 = 133.96

(new_clouds) myuser@comp:~/dublin$ python

Python 3.8.1 | packaged by conda-forge | (default, Jan 29 2020, 14:55:04)

[GCC 7.3.0] on linux

Type "help", "copyright", "credits" or "license" for more information.

>>> round(315000 + (315499.999 - 315000)/2, 2)

315250.0

>>> round(233500 + (233999.999 - 233500)/2, 2)

233750.0

>>> round(-93.254 + (361.183 - (-93.254))/2, 2)

133.96

>>> exit()

(new_clouds) myuser@comp:~/dublin$Now we can return the three nearest points from the calculated center (Note that we use /3).

pdal info raw_data/T_315000_233500.laz --query "315250, 233750, 133.96/3"(new_clouds) myuser@comp:~/dublin$ pdal info raw_data/T_315000_233500.laz --query "315250, 233750, 133.96/3"

proj_create_from_database: datum not found

proj_create_from_database: ellipsoid not found

proj_create_from_database: prime meridian not found

{

"filename": "raw_data/T_315000_233500.laz",

"pdal_version": "2.0.1 (git-version: 6600e6)",

"points":

{

"point":

[

{

"Classification": 4,

"EdgeOfFlightLine": 1,

"GpsTime": 402661.6853,

"Intensity": 14,

"NumberOfReturns": 1,

"PointId": 85108862,

"PointSourceId": 32,

"ReturnNumber": 1,

"ScanAngleRank": -32,

"ScanDirectionFlag": 1,

"UserData": 0,

"X": 315310.863,

"Y": 233568.3,

"Z": 361.183

},

{

"Classification": 4,

"EdgeOfFlightLine": 1,

"GpsTime": 389417.1273,

"Intensity": 7,

"NumberOfReturns": 1,

"PointId": 38157744,

"PointSourceId": 3,

"ReturnNumber": 1,

"ScanAngleRank": -31,

"ScanDirectionFlag": 1,

"UserData": 0,

"X": 315273.516,

"Y": 233870.654,

"Z": 358.511

},

{

"Classification": 4,

"EdgeOfFlightLine": 0,

"GpsTime": 400413.5956,

"Intensity": 90,

"NumberOfReturns": 2,

"PointId": 78937499,

"PointSourceId": 30,

"ReturnNumber": 1,

"ScanAngleRank": -33,

"ScanDirectionFlag": 1,

"UserData": 0,

"X": 315301.532,

"Y": 233689.65,

"Z": 355.9

}

]

}

}

(new_clouds) myuser@comp:~/dublin$Compression and Decompression (src)

Create a new folder named temp_data, decompress the file from LAZ to LAS using pdal translate, then compare their size. Note that it would take some time to complete the transformation, if the files were large, but after the compression the storage decreases significantly.

mkdir temp_data && pdal translate raw_data/T_315000_233500.laz temp_data/T_315000_233500.las

ls -lah raw_data/T_315000_233500.laz

ls -lah temp_data/T_315000_233500.las(new_clouds) myuser@comp:~/dublin$ ls -lah raw_data/T_315000_233500.laz

-rw-r--r-- 1 myuser myuser 431M apr 24 2017 raw_data/T_315000_233500.laz

(new_clouds) myuser@comp:~/dublin$ ls -lah temp_data/T_315000_233500.las

-rw-r--r-- 1 myuser myuser 2,9G mar 4 21:41 temp_data/T_315000_233500.las

(new_clouds) myuser@comp:~/dublin$From now on we will use the compressed files in our operations.

Reprojection (src)

Reproject file using pdal translate to “EPSG:4326” for testing purposes. I have first looked at metadata to find the original coordinate system, in spatialreference, looked after TM65 / Irish Gridand found out it was EPSG:29902.

pdal translate raw_data/T_315000_233500.laz \

temp_data/T_315000_233500_wgs84.laz reprojection \

--filters.reprojection.in_srs="EPSG:29902" \

--filters.reprojection.out_srs="EPSG:4326"

pdal info temp_data/T_315000_233500_wgs84.laz --all | jq .stats.bbox.native.bbox

pdal info temp_data/T_315000_233500_wgs84.laz -p 0(new_clouds) myuser@comp:~/dublin$ pdal translate raw_data/T_315000_233500.laz \

> temp_data/T_315000_233500_wgs84.laz reprojection \

> --filters.reprojection.in_srs="EPSG:29902" \

> --filters.reprojection.out_srs="EPSG:4326"

proj_create_from_database: datum not found

proj_create_from_database: ellipsoid not found

proj_create_from_database: prime meridian not found

(new_clouds) myuser@comp:~/dublin$ pdal info temp_data/T_315000_233500_wgs84.laz --all | jq .stats.bbox.native.bbox

{

"maxx": -6.27,

"maxy": 53.34,

"maxz": 361.18,

"minx": -6.27,

"miny": 53.34,

"minz": -93.25

}

(new_clouds) myuser@comp:~/dublin$ pdal info temp_data/T_315000_233500_wgs84.laz -p 0

{

"filename": "temp_data/T_315000_233500_wgs84.laz",

"pdal_version": "2.0.1 (git-version: 6600e6)",

"points":

{

"point":

{

"Blue": 0,

"Classification": 4,

"EdgeOfFlightLine": 0,

"GpsTime": 388567.0446,

"Green": 0,

"Intensity": 8,

"NumberOfReturns": 2,

"PointId": 0,

"PointSourceId": 0,

"Red": 0,

"ReturnNumber": 2,

"ScanAngleRank": 30,

"ScanDirectionFlag": 1,

"UserData": 0,

"X": -6.27,

"Y": 53.34,

"Z": -61.81

}

}

}

(new_clouds) myuser@comp:~/dublin$For latitude/longitude data, you will need to set the scale to smaller values like 0.0000001. Repeat the reproject command setting the scale, too.

pdal translate raw_data/T_315000_233500.laz \

temp_data/T_315000_233500_wgs84.laz reprojection \

--filters.reprojection.in_srs="EPSG:29902" \

--filters.reprojection.out_srs="EPSG:4326" \

--writers.las.scale_x=0.0000001 \

--writers.las.scale_y=0.0000001 \

--writers.las.offset_x="auto" \

--writers.las.offset_y="auto"

pdal info temp_data/T_315000_233500_wgs84.laz --all | jq .stats.bbox.native.bbox

pdal info temp_data/T_315000_233500_wgs84.laz -p 0(new_clouds) myuser@comp:~/dublin$ pdal translate raw_data/T_315000_233500.laz \

> temp_data/T_315000_233500_wgs84.laz reprojection \

> --filters.reprojection.in_srs="EPSG:29902" \

> --filters.reprojection.out_srs="EPSG:4326" \

> --writers.las.scale_x=0.0000001 \

> --writers.las.scale_y=0.0000001 \

> --writers.las.offset_x="auto" \

> --writers.las.offset_y="auto"

proj_create_from_database: datum not found

proj_create_from_database: ellipsoid not found

proj_create_from_database: prime meridian not found

(pdal translate writers.las Warning) Auto offset for Xrequested in stream mode. Using value of -6.26731.

(pdal translate writers.las Warning) Auto offset for Yrequested in stream mode. Using value of 53.3401.

(new_clouds) myuser@comp:~/dublin$ pdal info temp_data/T_315000_233500_wgs84.laz --all | jq .stats.bbox.native.bbox

{

"maxx": -6.266556934,

"maxy": 53.34396684,

"maxz": 361.18,

"minx": -6.274241434,

"miny": 53.33936784,

"minz": -93.25

}

(new_clouds) myuser@comp:~/dublin$ pdal info temp_data/T_315000_233500_wgs84.laz -p 0

{

"filename": "temp_data/T_315000_233500_wgs84.laz",

"pdal_version": "2.0.1 (git-version: 6600e6)",

"points":

{

"point":

{

"Blue": 0,

"Classification": 4,

"EdgeOfFlightLine": 0,

"GpsTime": 388567.0446,

"Green": 0,

"Intensity": 8,

"NumberOfReturns": 2,

"PointId": 0,

"PointSourceId": 0,

"Red": 0,

"ReturnNumber": 2,

"ScanAngleRank": 30,

"ScanDirectionFlag": 1,

"UserData": 0,

"X": -6.267313934,

"Y": 53.34010194,

"Z": -61.81

}

}

}

(new_clouds) myuser@comp:~/dublin$Colorizing points with imagery (src)

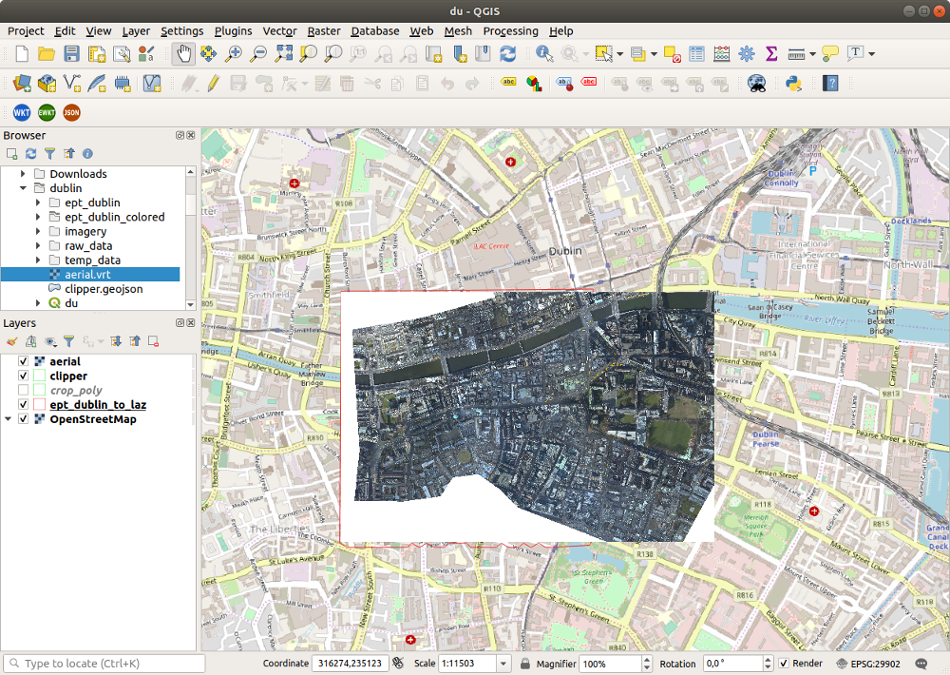

I have used the companion free aerial data downloaded from geo.nyu.edu, merged in a vrt file to color all files from the raw_data folder, then merged them.

cd imagery/

mv */*.* .

rm -rf nyu_*

tree

cd ..

gdalbuildvrt ./aerial.vrt ./imagery/*.tif(new_clouds) myuser@comp:~/dublin/imagery$ tree

.

├── 315000_233500.tfw

├── 315000_233500.tif

├── 315000_234000.tfw

├── 315000_234000.tif

├── 315500_233500.tfw

├── 315500_233500.tif

├── 315500_234000.tfw

└── 315500_234000.tif

0 directories, 8 files

(new_clouds) myuser@comp:~/dublin/imagery$ cd ..

(new_clouds) myuser@comp:~/dublin$ gdalbuildvrt ./aerial.vrt ./imagery/*.tif

0...10...20..proj_create_from_database: datum not found

proj_create_from_database: prime meridian not found

.30...40...50proj_create_from_database: datum not found

proj_create_from_database: prime meridian not found

...60...70..proj_create_from_database: datum not found

proj_create_from_database: prime meridian not found

.80...90...100 - done.

proj_create_from_database: datum not found

proj_create_from_database: prime meridian not found

(new_clouds) myuser@comp:~/dublin$

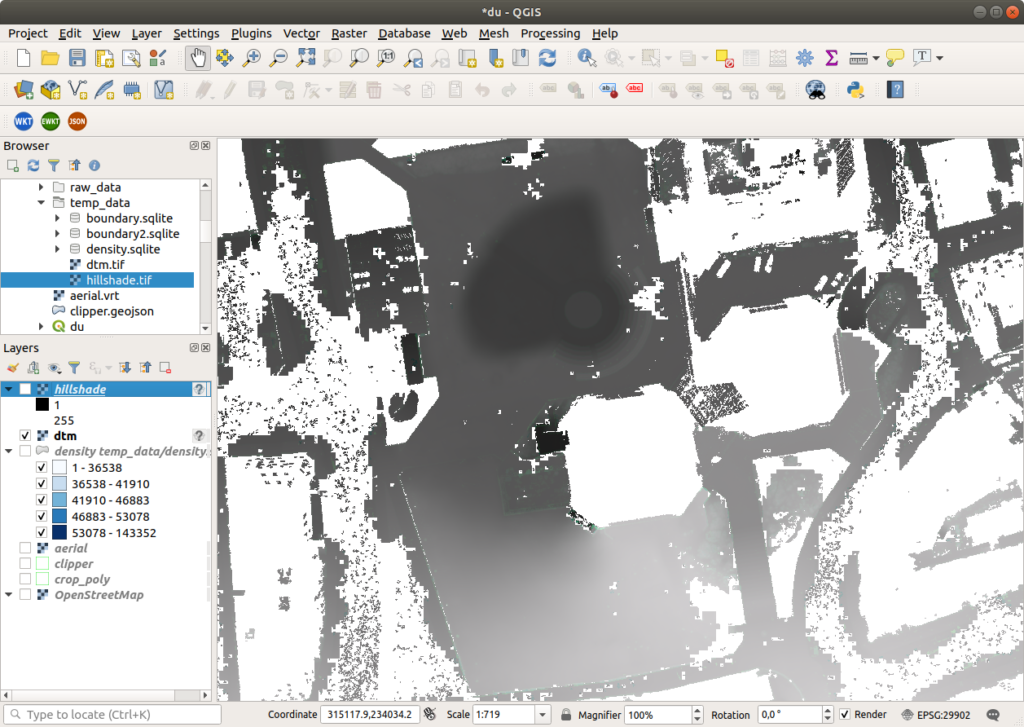

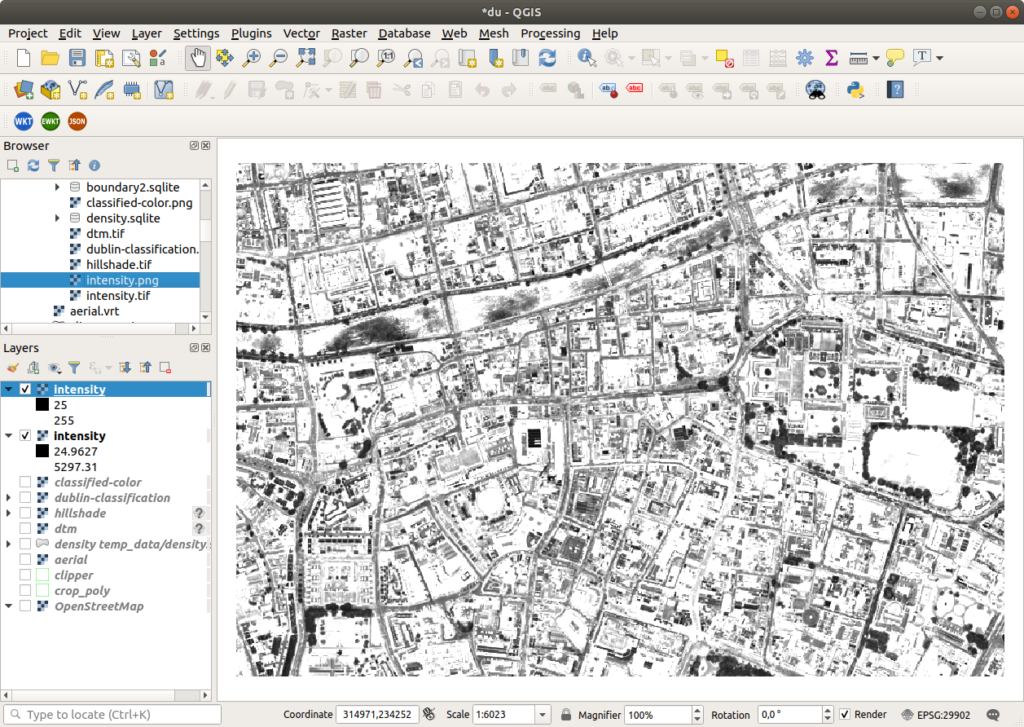

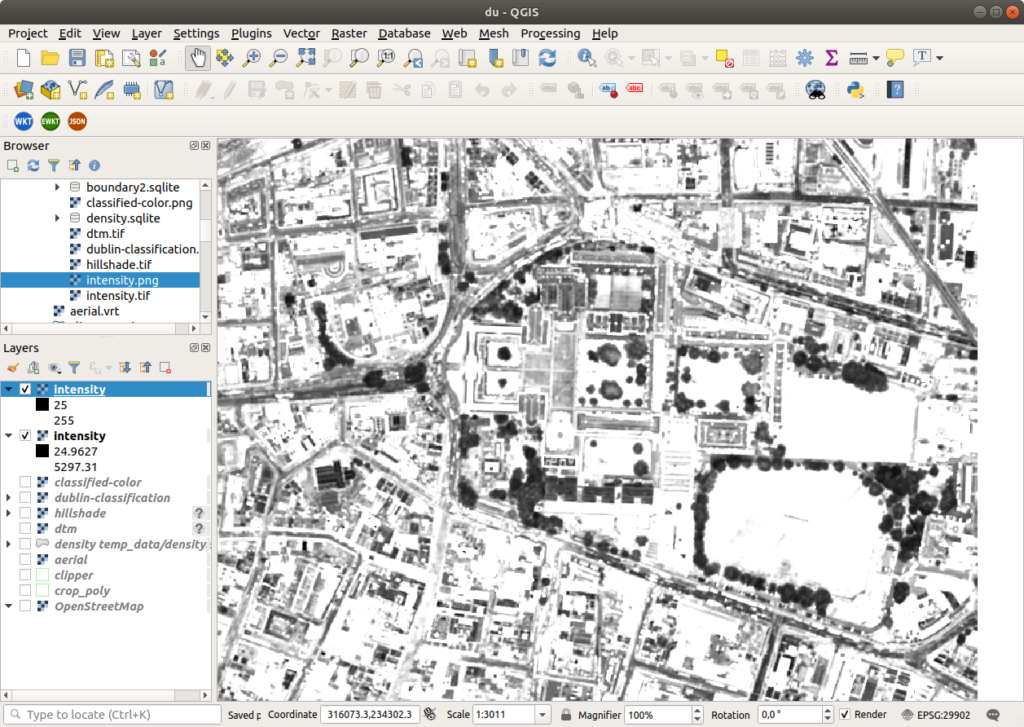

virtual layer in qgis

{

"pipeline": [

"temp_data/dublin-colored.laz",

{

"type": "filters.colorization",

"raster": "aerial.vrt"

},

{

"type": "filters.range",

"limits": "Red[1:]"

},

{

"type": "writers.las",

"compression": "true",

"minor_version": "2",

"dataformat_id": "3",

"filename":"temp_data/dublin-colored.laz"

}

]

}pdal pipeline colorize.json

ls -lah temp_data/dublin-colored.laz(new_clouds) myuser@comp:~/dublin$ pdal pipeline colorize_folder.json

proj_create_from_database: datum not found

proj_create_from_database: ellipsoid not found

proj_create_from_database: prime meridian not found

[...]

(new_clouds) myuser@comp:~/dublin$ vim colorize_folder.json

(new_clouds) myuser@comp:~/dublin$ ls -lah temp_data/dublin-colored.laz

-rw-r--r-- 1 myuser myuser 2,7G mar 5 20:09 temp_data/dublin-colored.laz

(new_clouds) myuser@comp:~/dublin$Now let’s try to visualize the LAZ file in Potree creating an Entwine EPT file.

Entwine (src)

We will use Entwine to create a new folder named ept_dublin, then rearrange points into a lossless octree structure known as EPT.

You can read this other post to find out how to install Entwine.

The build command is used to generate an Entwine Point Tile (EPT) dataset from point cloud data. Because we want to build the visualization from the merged and colored LAZ data, for input we provide the path to dublin-colored.laz to the command. We could also build directly from all files in raw_data folder, if we didn’t want the color version.

entwine build -i temp_data/dublin-colored.laz -o ept_dublin_colored --srs "EPSG:29902"

tree ept_dublin_colored/ | head -10

tree ept_dublin_colored/ | tail -10(new_clouds) myuser@comp:~/dublin$ entwine build -i temp_data/dublin-colored.laz -o ept_dublin_colored --srs "EPSG:29902"

Scanning input

1/1: temp_data/dublin-colored.laz

Entwine Version: 2.1.0

EPT Version: 1.0.0

Input:

File: temp_data/dublin-colored.laz

Total points: 528,506,762

Density estimate (per square unit): 352.339

Threads: [1, 7]

Output:

Path: ept_dublin_colored/

Data type: laszip

Hierarchy type: json

Sleep count: 2,097,152

Scale: 0.01

Offset: (315750, 234000, 138)

Metadata:

SRS: EPSG:29902

Bounds: [(314999, 233499, -110), (316501, 234501, 386)]

Cube: [(314998, 233248, -614), (316502, 234752, 890)]

Storing dimensions: [

X:int32, Y:int32, Z:int32, Intensity:uint16, ReturnNumber:uint8,

NumberOfReturns:uint8, ScanDirectionFlag:uint8,

EdgeOfFlightLine:uint8, Classification:uint8, ScanAngleRank:float,

UserData:uint8, PointSourceId:uint16, GpsTime:double, Red:uint16,

Green:uint16, Blue:uint16, OriginId:uint32

]

Build parameters:

Span: 128

Resolution 2D: 128 * 128 = 16,384

Resolution 3D: 128 * 128 * 128 = 2,097,152

Maximum node size: 65,536

Minimum node size: 16,384

Cache size: 64

Adding 0 - temp_data/dublin-colored.laz

Pushes complete - joining...

00:10 - 1% - 6,807,552 - 2,450(2,450)M/h - 0W - 0R - 172A

00:20 - 2% - 12,521,472 - 2,253(2,057)M/h - 85W - 9R - 229A

[...]

59:40 - 65% - 344,825,856 - 346(97)M/h - 235W - 60R - 576A

59:50 - 65% - 345,542,656 - 346(258)M/h - 0W - 81R - 673A

01:00:00 - 65% - 346,095,616 - 346(199)M/h - 0W - 97R - 782A

01:00:10 - 66% - 346,701,824 - 345(218)M/h - 60W - 99R - 840A

01:00:20 - 66% - 347,009,024 - 345(110)M/h - 303W - 42R - 578A

[...]

01:36:40 - 100% - 527,835,136 - 327(148)M/h - 24W - 56R - 589A

01:36:50 - 100% - 528,506,762 - 327(241)M/h - 66W - 75R - 618A

Done 0

Reawakened: 40810

Saving registry...

Saving metadata...

Index completed in 01:36:57.

Save complete.

Points inserted: 528,506,762

(new_clouds) myuser@comp:~/dublin$ tree ept_dublin_colored/ | head -10

ept_dublin_colored/

├── ept-build.json

├── ept-data

│ ├── 0-0-0-0.laz

│ ├── 1-0-0-0.laz

│ ├── 1-0-1-0.laz

│ ├── 1-1-0-0.laz

│ ├── 1-1-1-0.laz

│ ├── 2-0-0-1.laz

│ ├── 2-0-1-1.laz

(new_clouds) myuser@comp:~/dublin$ tree ept_dublin_colored/ | tail -10

│ ├── 8-231-115-104.laz

│ └── 8-244-129-105.laz

├── ept-hierarchy

│ └── 0-0-0-0.json

├── ept.json

└── ept-sources

├── 0.json

└── list.json

3 directories, 13109 files

(new_clouds) myuser@comp:~/dublin$Now we can statically serve the entwine directory with an HTTP server and visualize it with the WebGL-based Potree and Plasio projects.

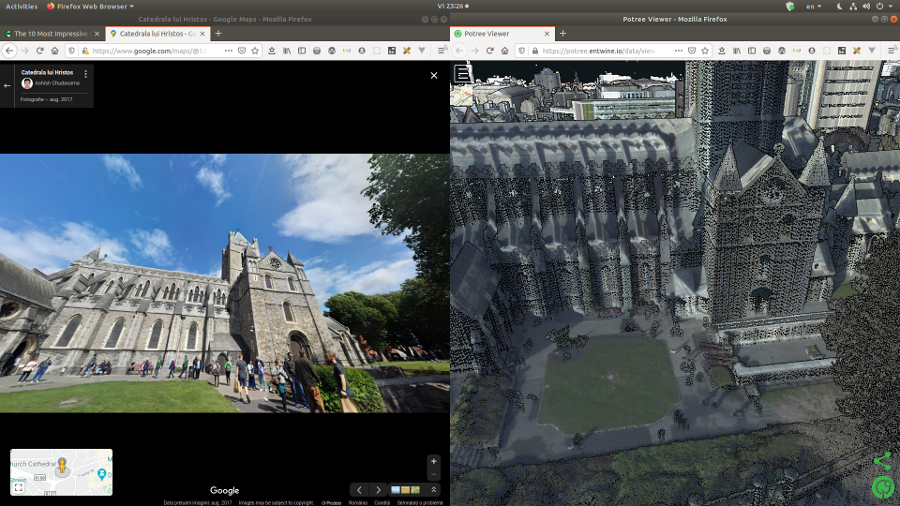

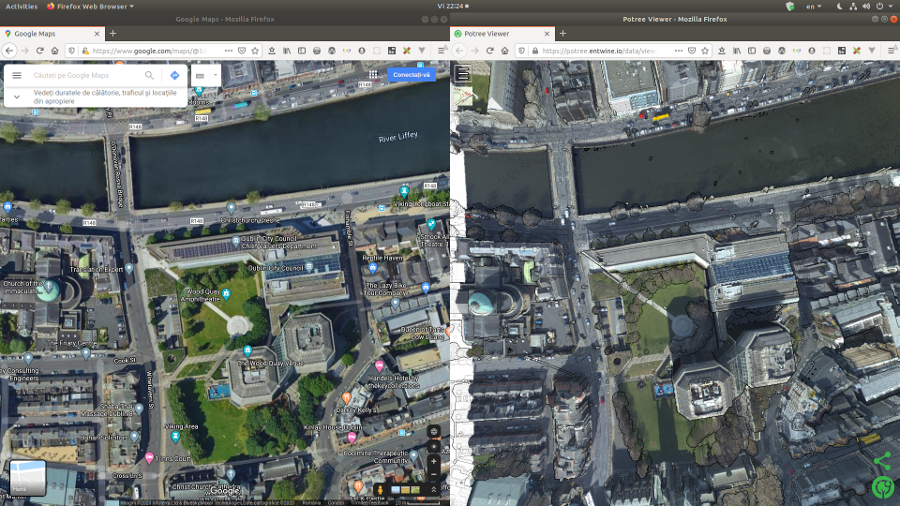

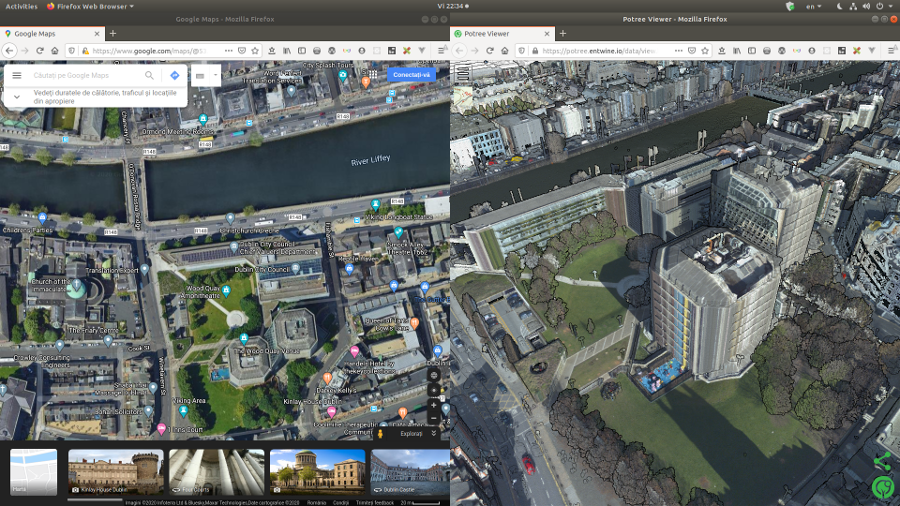

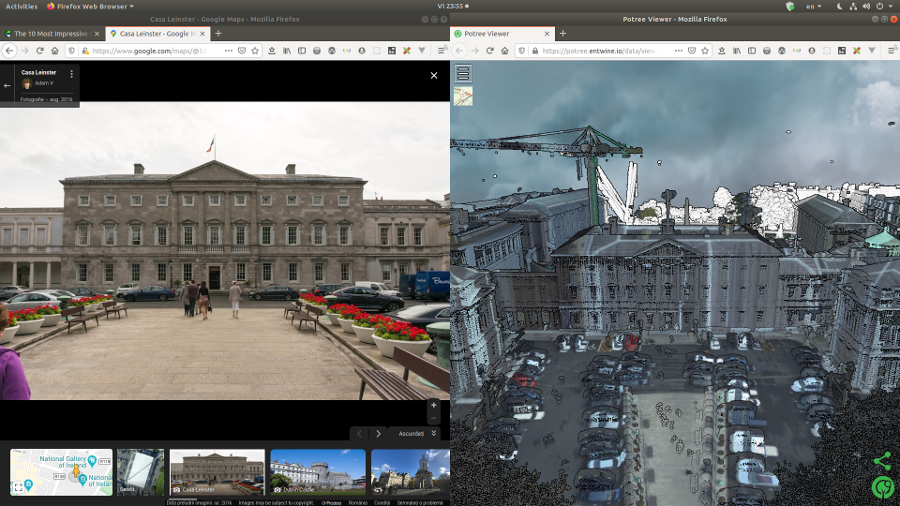

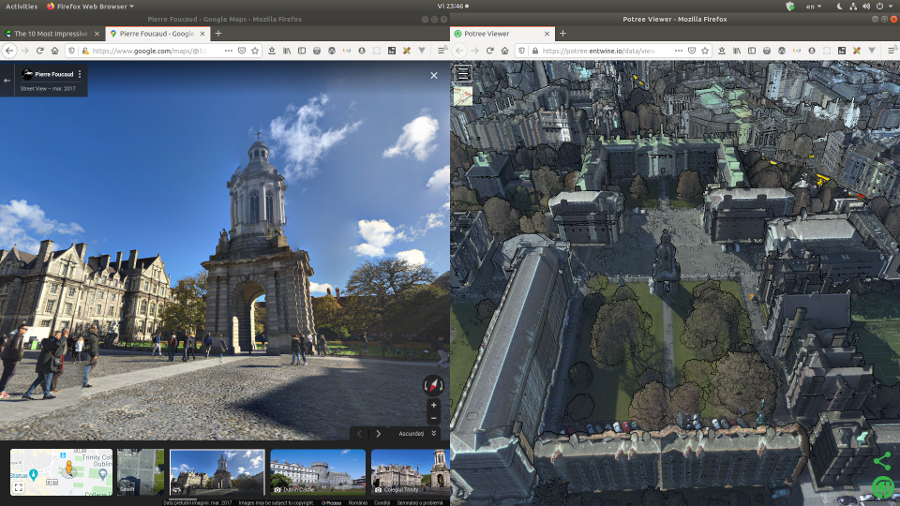

Potree and Plasio

We can visualize our data in Potree and Plasio, directly in the browser.

# Install NodeJS and http server from nodejs

conda install nodejs -y

npm install http-server -g

# Make sure you are in the working folder, and the EPT folder

# is a subfolder

# Start the server using --cors to allow serving data to the

# remote Potree/Plasio pages

http-server -p 8066 --cors(new_clouds) myuser@comp:~/dublin$ http-server -p 8066 --cors

Starting up http-server, serving ./

Available on:

http://127.0.0.1:8066

http://192.168.0.102:8066

http://192.168.63.1:8066

http://172.18.0.1:8066

http://192.168.61.1:8066

Hit CTRL-C to stop the server# In another tab (Shift+Ctrl+T), visualise out local point cloud data in Potree

xdg-open "http://potree.entwine.io/data/view.html?r=http://localhost:8066/ept_dublin_colored"

initial view

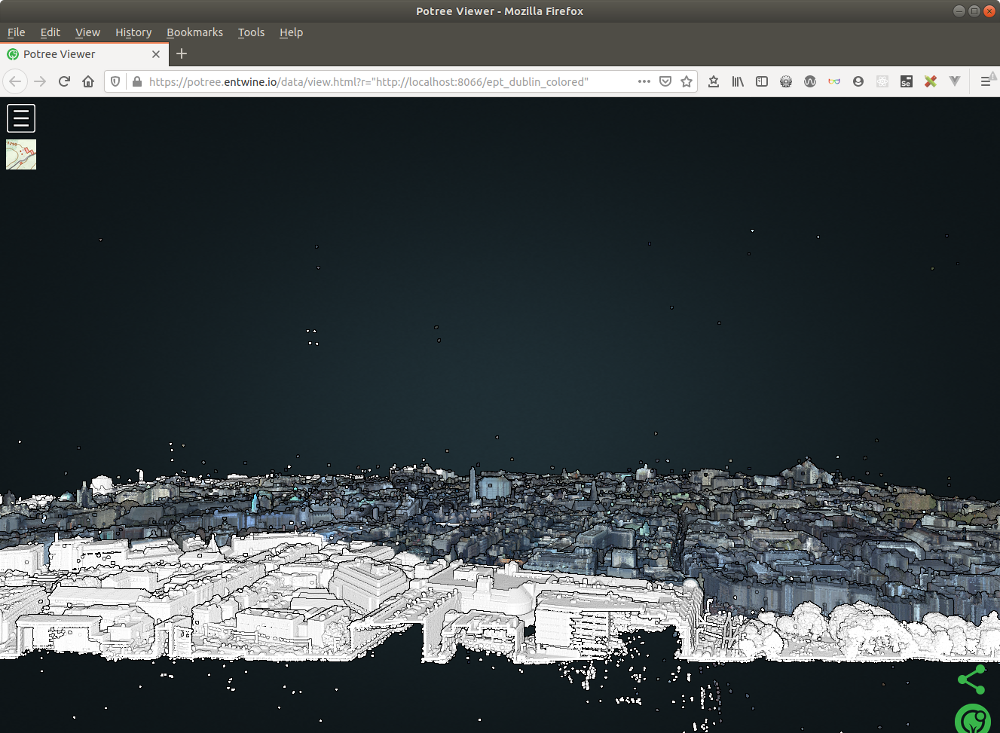

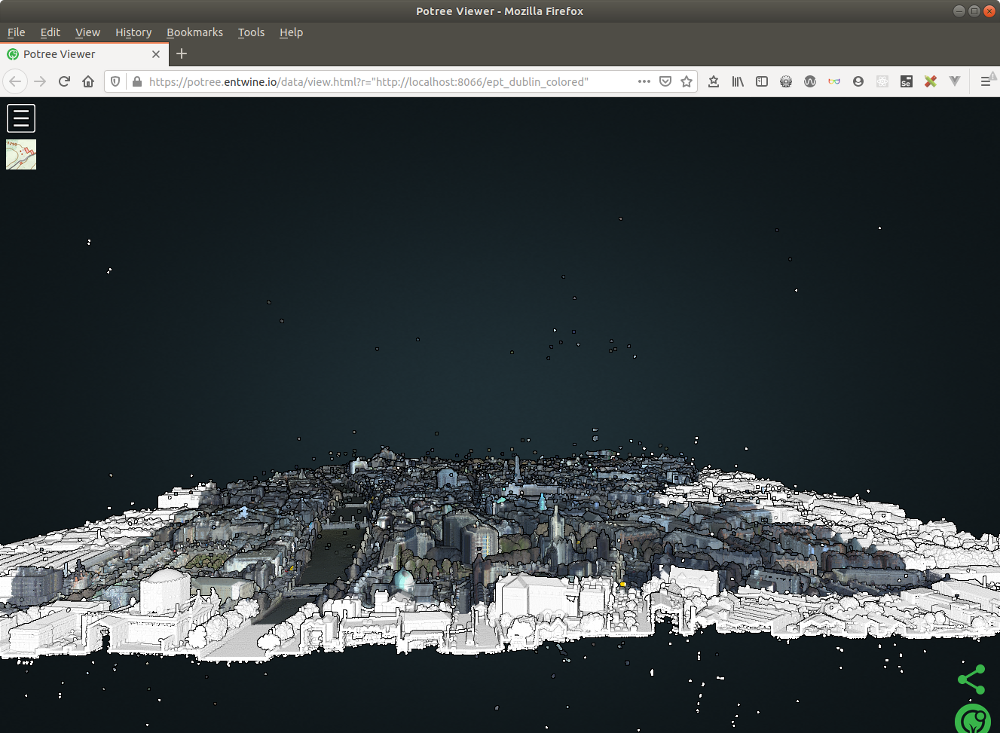

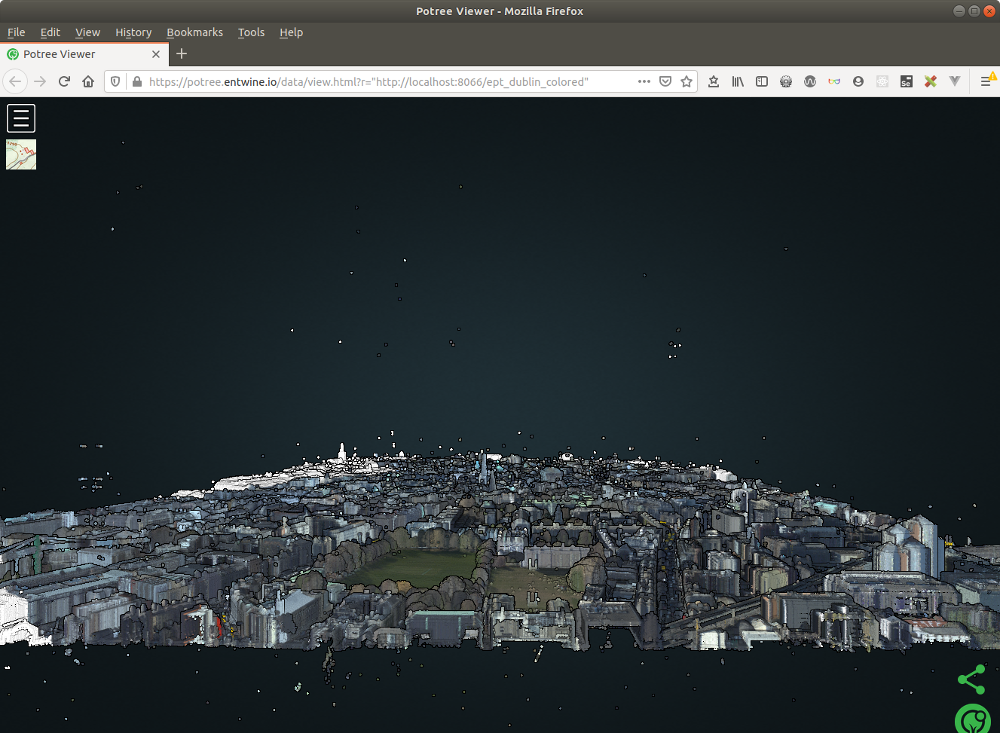

top view

back view

front view

left view

right view

christ church

christ church

city council

city council

dublin castle

dublin castle

dublin castle

national parliament

national parliament

national parliament

trinity college

trinity college

Assign a colour scale to the points based on elevation, classification and other criteria, then play with some terrain profiles.

The commonly used versions of the LAS format (1.2 and 1.3) have 8 classification categories pre-defined and can handle up to 32. For 1.4 see this document.

Visualize the points by point classification:

LiDAR data can contain significantly more information than x, y, and z values and may include, among others, the intensity of the returns, the point classification (if done), the number of returns, the time, and the source (flight line) of each point.

Intensity can be used as a substitute for aerial imagery when none is available.

Visualize the points by other attributes (intensity data, return number). We compare our views with Google Maps data.

Visualize the points in Plasio, too.

# Visualise our local point cloud data in Plasio

xdg-open "http://dev.speck.ly/?s=0&r=ept://localhost:8066/ept_dublin&c0s=local://color"Finding the boundary (src)

Optionally, you can create a JSON file named entwine.json, containing the above code, then convert from EPT to LAZ using the pdal pipeline command. I did that in the other experiments, because I had the data merged in the EPT file, and not in a LAZ file like dublin-colored.laz.

{

"pipeline": [

{

"type": "readers.ept",

"filename":"ept_dublin_colored",

"resolution": 0.001

},

{

"type": "writers.las",

"compression": "true",

"minor_version": "2",

"dataformat_id": "0",

"filename":"temp_data/dublin-colored.laz"

}

]

}_pdal pipeline entwine.json -v 7

pdal info temp_data/dublin-colored.laz | jq .stats.bbox.native.bbox

pdal info temp_data/dublin-colored.laz -p 0Skip that, and let’s get the boundary of the fitting polygon for dublin-colored.laz as WKT or JSON.

pdal info temp_data/dublin-colored.laz --boundary(new_clouds) myuser@comp:~/dublin$ pdal info temp_data/dublin-colored.laz --boundary

{

"boundary":

{

"area": 1516645.387,

"avg_pt_per_sq_unit": 0.008613751126,

"avg_pt_spacing": 0.05356939606,

"boundary": "POLYGON ((315025.03 233493.85,315030.13 233498.27,315048.01 233498.27,315053.11 233493.85,315086.3 233493.85,315091.41 233498.27,315101.62 233498.27,315106.73 233493.85,315114.39 233498.27,315139.92 233498.27,315145.03 233493.85,315193.54 233493.85,315198.64 233498.27,315206.3 233493.85,315213.96 233498.27,315224.17 233498.27,315229.28 233493.85,315262.47 233493.85,315267.58 233498.27,315277.79 233498.27,315282.9 233493.85,315362.04 233493.85,315367.15 233498.27,315374.81 233493.85,315415.66 233493.85,315420.77 233498.27,315453.96 233498.27,315459.06 233493.85,315476.94 233493.85,315482.04 233498.27,315489.7 233493.85,315530.55 233493.85,315535.66 233498.27,315543.32 233493.85,315568.85 233493.85,315573.95 233498.27,315581.61 233493.85,315589.27 233498.27,315596.93 233493.85,315706.72 233493.85,315711.82 233498.27,315719.48 233493.85,315729.7 233493.85,315734.8 233498.27,315742.46 233493.85,315767.99 233493.85,315773.1 233498.27,315783.31 233498.27,315788.42 233493.85,315796.08 233498.27,315803.74 233493.85,315811.4 233498.27,315819.06 233493.85,315928.84 233493.85,315933.95 233498.27,315941.61 233493.85,316166.29 233493.85,316171.39 233498.27,316181.6 233498.27,316186.71 233493.85,316242.88 233493.85,316247.99 233498.27,316258.2 233498.27,316263.3 233493.85,316273.52 233493.85,316278.62 233498.27,316286.28 233493.85,316293.94 233498.27,316301.6 233493.85,316311.81 233493.85,316316.92 233498.27,316324.58 233493.85,316342.45 233493.85,316347.56 233498.27,316355.22 233493.85,316373.09 233493.85,316378.2 233498.27,316385.86 233493.85,316396.07 233493.85,316401.17 233498.27,316408.83 233493.85,316416.49 233498.27,316424.15 233493.85,316472.66 233493.85,316477.77 233498.27,316487.98 233498.27,316495.64 233493.85,316503.3 233498.27,316503.3 234502.1,314999.5 234502.1,314998.22 233500.48,315003.33 233496.06,315017.37 233498.27,315025.03 233493.85))",

"boundary_json": { "type": "Polygon", "coordinates": [ [ [ 315025.027875099971425, 233493.846726599993417 ], [ 315030.134171339974273, 233498.268908869998995 ], [ 315048.006208200007677, 233498.268908869998995 ], [ 315053.112504450022243, 233493.846726599993417 ], [ 315086.303430049971212, 233493.846726599993417 ], [ 315091.40972628997406, 233498.268908869998995 ], [ 315101.622318779991474, 233498.268908869998995 ], [ 315106.72861503000604, 233493.846726599993417 ], [ 315114.38805940002203, 233498.268908869998995 ], [ 315139.919540620001499, 233498.268908869998995 ], [ 315145.025836870016064, 233493.846726599993417 ], [ 315193.535651199985296, 233493.846726599993417 ], [ 315198.641947449999861, 233498.268908869998995 ], [ 315206.301391820015851, 233493.846726599993417 ], [ 315213.960836189973634, 233498.268908869998995 ], [ 315224.173428679991048, 233498.268908869998995 ], [ 315229.279724919993896, 233493.846726599993417 ], [ 315262.470650520001072, 233493.846726599993417 ], [ 315267.576946760003921, 233498.268908869998995 ], [ 315277.789539259974845, 233498.268908869998995 ], [ 315282.895835499977693, 233493.846726599993417 ], [ 315362.043427310010884, 233493.846726599993417 ], [ 315367.149723550013732, 233498.268908869998995 ], [ 315374.809167919971514, 233493.846726599993417 ], [ 315415.659537889994681, 233493.846726599993417 ], [ 315420.765834129997529, 233498.268908869998995 ], [ 315453.956759730004705, 233498.268908869998995 ], [ 315459.063055970007554, 233493.846726599993417 ], [ 315476.93509282998275, 233493.846726599993417 ], [ 315482.041389079997316, 233498.268908869998995 ], [ 315489.700833450013306, 233493.846726599993417 ], [ 315530.551203410024755, 233493.846726599993417 ], [ 315535.657499659981113, 233498.268908869998995 ], [ 315543.316944029997103, 233493.846726599993417 ], [ 315568.848425249976572, 233493.846726599993417 ], [ 315573.954721499991138, 233498.268908869998995 ], [ 315581.614165870007128, 233493.846726599993417 ], [ 315589.273610240023118, 233498.268908869998995 ], [ 315596.93305460002739, 233493.846726599993417 ], [ 315706.718423890008125, 233493.846726599993417 ], [ 315711.824720130010974, 233498.268908869998995 ], [ 315719.484164500026964, 233493.846726599993417 ], [ 315729.69675698998617, 233493.846726599993417 ], [ 315734.803053240000736, 233498.268908869998995 ], [ 315742.462497600005008, 233493.846726599993417 ], [ 315767.993978829996195, 233493.846726599993417 ], [ 315773.100275080010761, 233498.268908869998995 ], [ 315783.312867570028175, 233498.268908869998995 ], [ 315788.419163809972815, 233493.846726599993417 ], [ 315796.078608179988805, 233498.268908869998995 ], [ 315803.738052550004795, 233493.846726599993417 ], [ 315811.397496920020785, 233498.268908869998995 ], [ 315819.056941289978568, 233493.846726599993417 ], [ 315928.842310570005793, 233493.846726599993417 ], [ 315933.948606810008641, 233498.268908869998995 ], [ 315941.608051180024631, 233493.846726599993417 ], [ 316166.285085989977233, 233493.846726599993417 ], [ 316171.391382229980081, 233498.268908869998995 ], [ 316181.603974719997495, 233498.268908869998995 ], [ 316186.710270970012061, 233493.846726599993417 ], [ 316242.879529669997282, 233493.846726599993417 ], [ 316247.985825920011848, 233498.268908869998995 ], [ 316258.198418409971055, 233498.268908869998995 ], [ 316263.304714649973903, 233493.846726599993417 ], [ 316273.517307150003035, 233493.846726599993417 ], [ 316278.623603390005883, 233498.268908869998995 ], [ 316286.283047760021873, 233493.846726599993417 ], [ 316293.942492129979655, 233498.268908869998995 ], [ 316301.601936499995645, 233493.846726599993417 ], [ 316311.814528990013059, 233493.846726599993417 ], [ 316316.920825230015907, 233498.268908869998995 ], [ 316324.58026959997369, 233493.846726599993417 ], [ 316342.452306460007094, 233493.846726599993417 ], [ 316347.55860271002166, 233498.268908869998995 ], [ 316355.218047070025932, 233493.846726599993417 ], [ 316373.090083930001128, 233493.846726599993417 ], [ 316378.196380180015694, 233498.268908869998995 ], [ 316385.855824549973477, 233493.846726599993417 ], [ 316396.068417039990891, 233493.846726599993417 ], [ 316401.174713290005457, 233498.268908869998995 ], [ 316408.834157650009729, 233493.846726599993417 ], [ 316416.493602020025719, 233498.268908869998995 ], [ 316424.153046389983501, 233493.846726599993417 ], [ 316472.66286072001094, 233493.846726599993417 ], [ 316477.769156970025506, 233498.268908869998995 ], [ 316487.981749459984712, 233498.268908869998995 ], [ 316495.641193830000702, 233493.846726599993417 ], [ 316503.300638200016692, 233498.268908869998995 ], [ 316503.300638200016692, 234502.104283939988818 ], [ 314999.496393869980238, 234502.104283939988818 ], [ 314998.21981981000863, 233500.480000000010477 ], [ 315003.326116060023196, 233496.057817730004899 ], [ 315017.368430730013642, 233498.268908869998995 ], [ 315025.027875099971425, 233493.846726599993417 ] ] ] },

"density": 348.4708861,

"edge_length": 0,

"estimated_edge": 4.422182269,

"hex_offsets": "MULTIPOINT (0 0, -1.27657 2.21109, 0 4.42218, 2.55315 4.42218, 3.82972 2.21109, 2.55315 0)",

"sample_size": 5000,

"threshold": 15

},

"filename": "temp_data/dublin-colored.laz",

"pdal_version": "2.0.1 (git-version: 6600e6)"

}

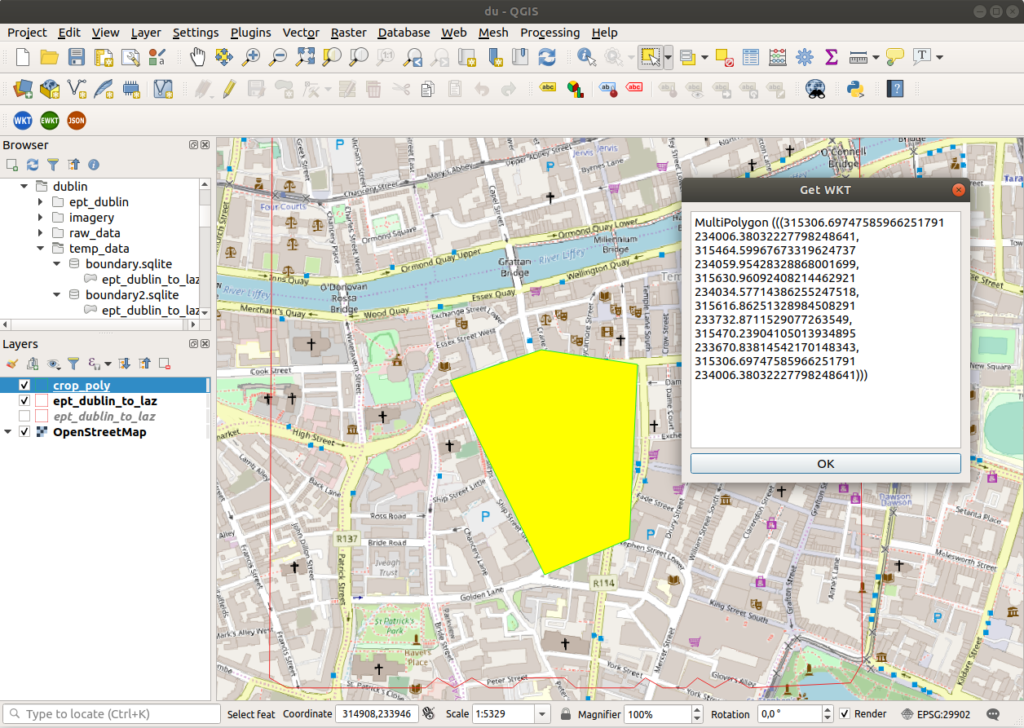

(new_clouds) myuser@comp:~/dublin$Get a vector geometry file using pdal tindex, the “tile index” command. Then view the file in QGIS.

# simple tindex

pdal tindex create --tindex temp_data/boundary.sqlite \

--filespec temp_data/dublin-colored.laz \

--t_srs "EPSG:29902" \

-f SQLite

# set edge size for output

pdal tindex create --tindex temp_data/boundary2.sqlite \

--filespec temp_data/dublin-colored.laz \

--filters.hexbin.edge_size=10 \

--filters.hexbin.threshold=1 \

--t_srs "EPSG:29902" \

-f SQLiteOr use Hexbin to output the boundary of actual points in point buffer, not just rectangular extents. Create a file named hexbin-pipeline.json and populate it with the code below, then run pdal pipeline.

[

"temp_data/dublin-colored.laz",

{

"type" : "filters.hexbin"

}

]tree -L 1

pdal pipeline hexbin-pipeline.json --metadata hexbin-out.json

pdal info --boundary temp_data/dublin-colored.laz(new_clouds) myuser@comp:~/dublin$ pdal info --boundary temp_data/dublin-colored.laz

{

"boundary":

{

"area": 7113155.893,

"avg_pt_per_sq_unit": 0.06273320804,

"avg_pt_spacing": 0.144606602,

"boundary": "POLYGON ((316291.86 232076.82,316291.86 234942.76,314223.56 235659.24,314223.56 232793.31,316291.86 232076.82))",

"boundary_json": { "type": "Polygon", "coordinates": [ [ [ 316291.863240830018185, 232076.824112250003964 ], [ 316291.863240830018185, 234942.755887750012334 ], [ 314223.555138760013506, 235659.238831630005734 ], [ 314223.555138760013506, 232793.307056119985646 ], [ 316291.863240830018185, 232076.824112250003964 ] ] ] },

"density": 47.82156206,

"edge_length": 0,

"estimated_edge": 1432.965888,

"hex_offsets": "MULTIPOINT (0 0, -413.662 716.483, 0 1432.97, 827.323 1432.97, 1240.98 716.483, 827.323 0)",

"sample_size": 5000,

"threshold": 15

},

"filename": "temp_data/dublin-colored.laz",

"pdal_version": "2.0.1 (git-version: 6600e6)"

}

(new_clouds) myuser@comp:~/dublin$Cropping

pdal translate -i temp_data/dublin-colored.laz \

-o temp_data/ept_dublin_cropped.laz \

--readers.las.spatialreference="EPSG:29902" -f crop \

--filters.crop.polygon="MultiPolygon (((315306.69747585966251791 234006.38032227798248641, 315464.59967673319624737 234059.95428328868001699, 315630.96092408214462921 234034.57714386255247518, 315616.86251328984508291 233732.8711529077263549, 315470.23904105013934895 233670.83814542170148343, 315306.69747585966251791 234006.38032227798248641)))"

pdal info temp_data/ept_dublin_cropped.laz --boundary(new_clouds) myuser@comp:~/dublin$ pdal translate -i temp_data/dublin-colored.laz \

> -o temp_data/ept_dublin_cropped.laz \

> --readers.las.spatialreference="EPSG:29902" -f crop \

> --filters.crop.polygon="MultiPolygon (((315306.69747585966251791 234006.38032227798248641, 315464.59967673319624737 234059.95428328868001699, 315630.96092408214462921 234034.57714386255247518, 315616.86251328984508291 233732.8711529077263549, 315470.23904105013934895 233670.83814542170148343, 315306.69747585966251791 234006.38032227798248641)))"

(new_clouds) myuser@comp:~/dublin$ pdal info temp_data/ept_dublin_cropped.laz --boundary

{

"boundary":

{

"area": 2226527.833,

"avg_pt_per_sq_unit": 14.10215304,

"avg_pt_spacing": 2.168113852,

"boundary": "POLYGON ((316005.52 232786.05,316272.75 234174.66,315471.04 234637.53,314402.09 233711.79,316005.52 232786.05))",

"boundary_json": { "type": "Polygon", "coordinates": [ [ [ 316005.515120149997529, 232786.051936289994046 ], [ 316272.752680230012629, 234174.659031860006507 ], [ 315471.039999999979045, 234637.528063709993148 ], [ 314402.089759700000286, 233711.790000000008149 ], [ 316005.515120149997529, 232786.051936289994046 ] ] ] },

"density": 0.2127334736,

"edge_length": 0,

"estimated_edge": 925.7380637,

"hex_offsets": "MULTIPOINT (0 0, -267.238 462.869, 0 925.738, 534.475 925.738, 801.713 462.869, 534.475 0)",

"sample_size": 5000,

"threshold": 15

},

"filename": "temp_data/ept_dublin_cropped.laz",

"pdal_version": "2.0.1 (git-version: 6600e6)"

}

(new_clouds) myuser@comp:~/dublin$

get wkt from polygon in qgis

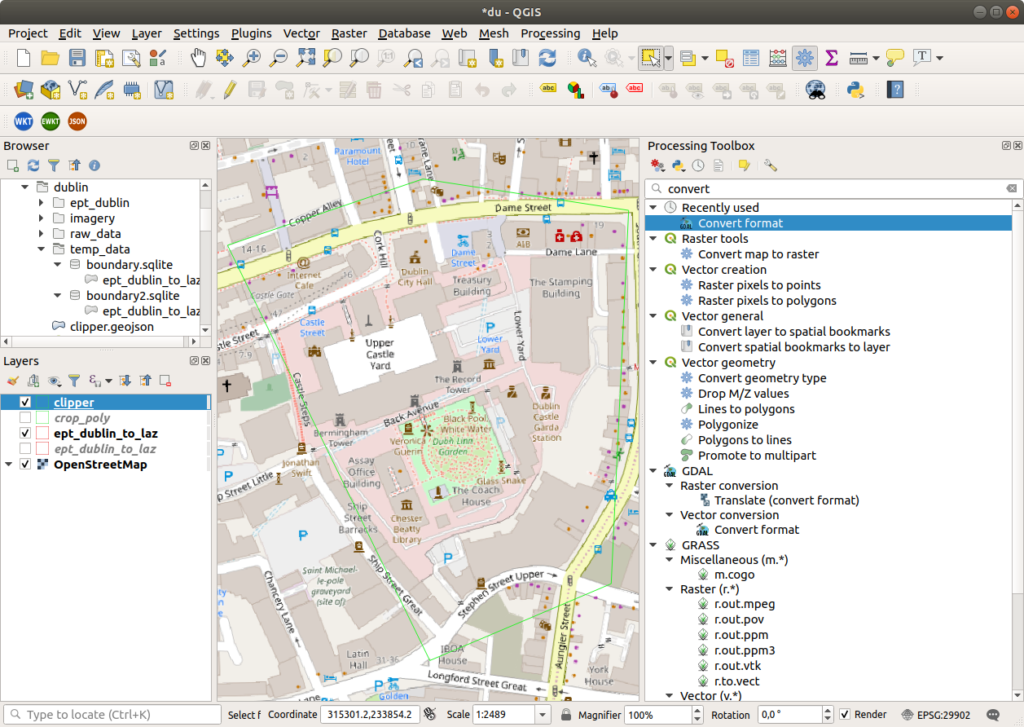

Clipping data with polygons (src)

TODO

{

"pipeline": [

"temp_data/dublin-colored.laz",

{

"column": "CLS",

"datasource": "clipper.geojson",

"dimension": "Classification",

"type": "filters.overlay"

},

{

"limits": "Classification[6:6]",

"type": "filters.range"

},

"temp_data/ept_dublin_clipped.laz"

]

}pdal pipeline clipping.json --nostream

Convert to geojson in qgis

Removing noise (src)

Use PDAL to remove unwanted noise. Create a file named denoise.json and populate it with the following code, then run pdal pipeline.

{

"pipeline": [

"raw_data/T_315000_233500.laz",

{

"type": "filters.outlier",

"method": "statistical",

"multiplier": 3,

"mean_k": 8

},

{

"type": "filters.range",

"limits": "Classification![7:7],Z[-100:3000]"

},

{

"type": "writers.las",

"compression": "true",

"minor_version": "2",

"dataformat_id": "0",

"filename":"temp_data/clean.laz"

}

]

}pdal pipeline denoise.json

ls -lah temp_data/clean.laz(new_clouds) myuser@comp:~/dublin$ pdal pipeline denoise.json

proj_create_from_database: datum not found

proj_create_from_database: ellipsoid not found

proj_create_from_database: prime meridian not found

(new_clouds) myuser@comp:~/dublin$ ls -lah temp_data/clean.laz

-rw-r--r-- 1 myuser myuser 246M mar 6 00:38 temp_data/clean.laz

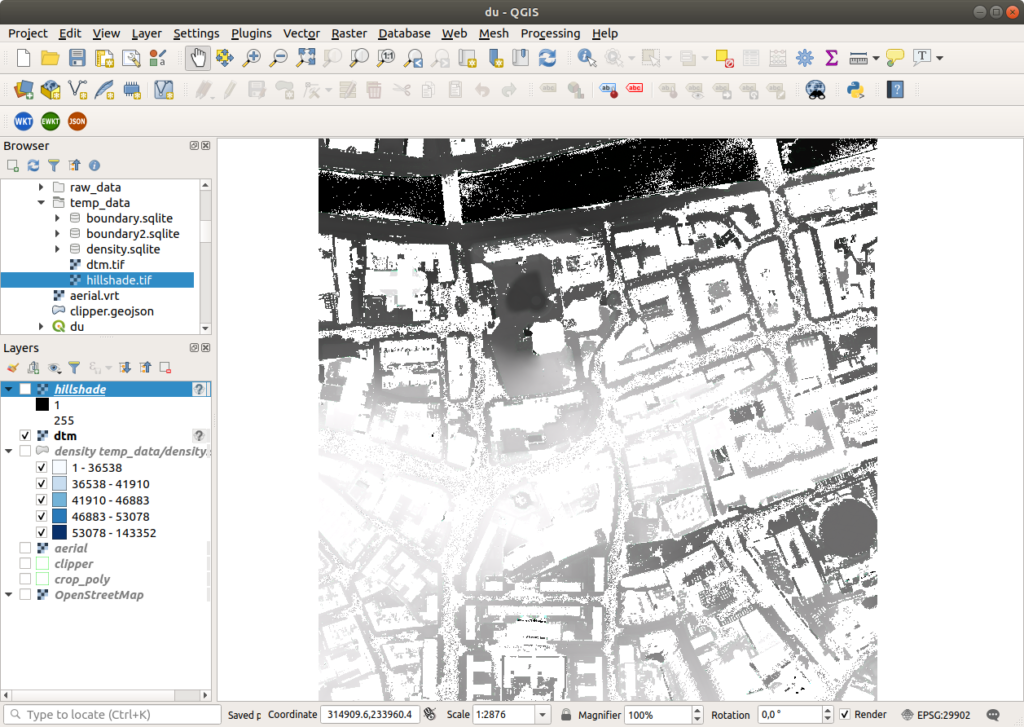

(new_clouds) myuser@comp:~/dublin$Visualizing acquisition density (src)

Generate a density surface to summarize acquisition quality, and visualize it in QGIS, applying a gradient color ramp and using the count attribute.

pdal density raw_data/T_315000_233500.laz \

-o temp_data/density.sqlite \

-f SQLiteThinning (src)

Subsample or thin point cloud data, to accelerate processing (less data), normalize point density, or ease visualization.

pdal translate raw_data/T_315000_233500.laz \

temp_data/thin.laz \

sample --filters.sample.radius=20

ls -lah temp_data/thin.laz(new_clouds) myuser@comp:~/dublin$ pdal translate raw_data/T_315000_233500.laz \

> temp_data/thin.laz \

> sample --filters.sample.radius=20

proj_create_from_database: datum not found

proj_create_from_database: ellipsoid not found

proj_create_from_database: prime meridian not found

(new_clouds) myuser@comp:~/dublin$ ls -lah temp_data/thin.laz

-rw-r--r-- 1 myuser myuser 13K mar 6 00:51 temp_data/thin.laz

(new_clouds) myuser@comp:~/dublin$Creating tiles (tile)

Before running the next commands, we will have to split the files into tiles. Then run the command in batches, because we can run out of memory if the file was too large, with the error PDAL: std::bad_allocfor example.

We will use dublin-colored.laz.

And now we create LAZ tiles using the pdal tile command. If we don’t specify --length, each output file contains points in the 1000×1000 square units represented by the tile.

# Create the folder for tiles

mkdir -pv laz_tiles

# Create the tiles from all files in the specified folder

pdal tile -i "temp_data/dublin-colored.laz" \

-o "laz_tiles/dublin_#.laz" \

--buffer="20" \

--out_srs="EPSG:29902"

# List the files in the newly created folder

ls -la laz_tiles | head -10

ls -la laz_tiles | tail -10(new_clouds) myuser@comp:~/dublin$ mkdir -pv laz_tiles

(new_clouds) myuser@comp:~/dublin$ # Create the tiles from all files in the specified folder

(new_clouds) myuser@comp:~/dublin$ pdal tile -i "temp_data/dublin-colored.laz" \

> -o "laz_tiles/dublin_#.laz" \

> --buffer="20" \

> --out_srs="EPSG:29902"

(new_clouds) myuser@comp:~/dublin$ # List the files in the newly created folder

(new_clouds) myuser@comp:~/dublin$ ls -la laz_tiles | head -10

total 3006672

drwxr-xr-x 2 myuser myuser 4096 mar 6 01:10 .

drwxrwxr-x 8 myuser myuser 4096 mar 6 00:54 ..

-rw-r--r-- 1 myuser myuser 2081807749 mar 6 01:14 dublin_0_0.laz

-rw-r--r-- 1 myuser myuser 40442660 mar 6 01:14 dublin_0_-1.laz

-rw-r--r-- 1 myuser myuser 31597905 mar 6 01:14 dublin_0_1.laz

-rw-r--r-- 1 myuser myuser 863749935 mar 6 01:14 dublin_-1_0.laz

-rw-r--r-- 1 myuser myuser 37421064 mar 6 01:14 dublin_1_0.laz

-rw-r--r-- 1 myuser myuser 12858789 mar 6 01:14 dublin_-1_-1.laz

-rw-r--r-- 1 myuser myuser 9801056 mar 6 01:14 dublin_-1_1.laz

(new_clouds) myuser@comp:~/dublin$ ls -la laz_tiles | tail -10

drwxrwxr-x 8 myuser myuser 4096 mar 6 00:54 ..

-rw-r--r-- 1 myuser myuser 2081807749 mar 6 01:14 dublin_0_0.laz

-rw-r--r-- 1 myuser myuser 40442660 mar 6 01:14 dublin_0_-1.laz

-rw-r--r-- 1 myuser myuser 31597905 mar 6 01:14 dublin_0_1.laz

-rw-r--r-- 1 myuser myuser 863749935 mar 6 01:14 dublin_-1_0.laz

-rw-r--r-- 1 myuser myuser 37421064 mar 6 01:14 dublin_1_0.laz

-rw-r--r-- 1 myuser myuser 12858789 mar 6 01:14 dublin_-1_-1.laz

-rw-r--r-- 1 myuser myuser 9801056 mar 6 01:14 dublin_-1_1.laz

-rw-r--r-- 1 myuser myuser 454185 mar 6 01:14 dublin_1_-1.laz

-rw-r--r-- 1 myuser myuser 637080 mar 6 01:14 dublin_1_1.laz

(new_clouds) myuser@comp:~/dublin$Identifying ground (src)

You can classify ground returns using the Simple Morphological Filter (SMRF) technique.

pdal translate raw_data/T_315000_233500.laz \

-o temp_data/ground.laz \

smrf \

-v 4To aquire a more accurate representation of the ground returns, you can remove the non-ground data to just view the ground data, then use the translate command to stack the filters.outlier and filters.smrf.

pdal translate \

raw_data/T_315000_233500.laz \

-o temp_data/ground.laz \

smrf range \

--filters.range.limits="Classification[2:2]" \

-v 4Let’s run only the next command, to spare some time, usually this process lasts longer.

pdal translate raw_data/T_315000_233500.laz \

-o temp_data/denoised_ground.laz \

outlier smrf range \

--filters.outlier.method="statistical" \

--filters.outlier.mean_k=8 --filters.outlier.multiplier=3.0 \

--filters.smrf.ignore="Classification[7:7]" \

--filters.range.limits="Classification[2:2]" \

--writers.las.compression=true \

--verbose 4(new_clouds) myuser@comp:~/dublin$ pdal translate raw_data/T_315000_233500.laz \

> -o temp_data/denoised_ground.laz \

> outlier smrf range \

> --filters.outlier.method="statistical" \

> --filters.outlier.mean_k=8 --filters.outlier.multiplier=3.0 \

> --filters.smrf.ignore="Classification[7:7]" \

> --filters.range.limits="Classification[2:2]" \

> --writers.las.compression=true \

> --verbose 4

(PDAL Debug) Debugging...

proj_create_from_database: datum not found

proj_create_from_database: ellipsoid not found

proj_create_from_database: prime meridian not found

(pdal translate Debug) Executing pipeline in standard mode.

(pdal translate filters.smrf Debug) progressiveFilter: radius = 1 246873 ground 3127 non-ground (1.25%)

(pdal translate filters.smrf Debug) progressiveFilter: radius = 1 217461 ground 32539 non-ground (13.02%)

(pdal translate filters.smrf Debug) progressiveFilter: radius = 2 197553 ground 52447 non-ground (20.98%)

(pdal translate filters.smrf Debug) progressiveFilter: radius = 3 182276 ground 67724 non-ground (27.09%)

(pdal translate filters.smrf Debug) progressiveFilter: radius = 4 170944 ground 79056 non-ground (31.62%)

(pdal translate filters.smrf Debug) progressiveFilter: radius = 5 161703 ground 88297 non-ground (35.32%)

(pdal translate filters.smrf Debug) progressiveFilter: radius = 6 153674 ground 96326 non-ground (38.53%)

(pdal translate filters.smrf Debug) progressiveFilter: radius = 7 146785 ground 103215 non-ground (41.29%)

(pdal translate filters.smrf Debug) progressiveFilter: radius = 8 143205 ground 106795 non-ground (42.72%)

(pdal translate filters.smrf Debug) progressiveFilter: radius = 9 140842 ground 109158 non-ground (43.66%)

(pdal translate filters.smrf Debug) progressiveFilter: radius = 10 139423 ground 110577 non-ground (44.23%)

(pdal translate filters.smrf Debug) progressiveFilter: radius = 11 138136 ground 111864 non-ground (44.75%)

(pdal translate filters.smrf Debug) progressiveFilter: radius = 12 137225 ground 112775 non-ground (45.11%)

(pdal translate filters.smrf Debug) progressiveFilter: radius = 13 136792 ground 113208 non-ground (45.28%)

(pdal translate filters.smrf Debug) progressiveFilter: radius = 14 136497 ground 113503 non-ground (45.40%)

(pdal translate filters.smrf Debug) progressiveFilter: radius = 15 136386 ground 113614 non-ground (45.45%)

(pdal translate filters.smrf Debug) progressiveFilter: radius = 16 136140 ground 113860 non-ground (45.54%)

(pdal translate filters.smrf Debug) progressiveFilter: radius = 17 136119 ground 113881 non-ground (45.55%)

(pdal translate filters.smrf Debug) progressiveFilter: radius = 18 135954 ground 114046 non-ground (45.62%)

(pdal translate writers.las Debug) Wrote 25290587 points to the LAS file

(new_clouds) myuser@comp:~/dublin$Now do the same for all the files in laz_tiles, providing the input file name and the output file name on the fly in batch processing. Parallel, as its name implies, allows paralell operations. After that, merge all the resulting tiles in a new LAZ file named denoised_grnd_f.laz.

mkdir -pv denoised_grnd

ls laz_tiles/*.laz | \

parallel -I{} pdal translate {} \

-o denoised_grnd/denoised_grnd{/.}.laz \

outlier smrf range \

--filters.outlier.method="statistical" \

--filters.outlier.mean_k=8 --filters.outlier.multiplier=3.0 \

--filters.smrf.ignore="Classification[7:7]" \

--filters.range.limits="Classification[2:2]" \

--writers.las.compression=true \

--verbose 4

ls -la denoised_grnd/ | tail -10

pdal merge denoised_grnd/*.laz denoised_grnd_f.laz

du -h denoised_grnd_f.laz(new_clouds) myuser@comp:~/dublin$ mkdir -pv denoised_grnd

mkdir: created directory 'denoised_grnd'

(new_clouds) myuser@comp:~/dublin$ ls laz_tiles/*.laz | \

> parallel -I{} pdal translate {} \

> -o denoised_grnd/denoised_grnd{/.}.laz \

> outlier smrf range \

> --filters.outlier.method="statistical" \

> --filters.outlier.mean_k=8 --filters.outlier.multiplier=3.0 \

> --filters.smrf.ignore="Classification[7:7]" \

> --filters.range.limits="Classification[2:2]" \

> --writers.las.compression=true \

> --verbose 4

Academic tradition requires you to cite works you base your article on.

When using programs that use GNU Parallel to process data for publication

please cite:

O. Tange (2011): GNU Parallel - The Command-Line Power Tool,

;login: The USENIX Magazine, February 2011:42-47.

This helps funding further development; AND IT WON'T COST YOU A CENT.

If you pay 10000 EUR you should feel free to use GNU Parallel without citing.

To silence this citation notice: run 'parallel --citation'.

(PDAL Debug) Debugging...

(pdal translate Debug) Executing pipeline in standard mode.

(pdal translate filters.smrf Debug) progressiveFilter: radius = 1 390 ground 9 non-ground (2.26%)

(pdal translate filters.smrf Debug) progressiveFilter: radius = 1 325 ground 74 non-ground (18.55%)

(pdal translate filters.smrf Debug) progressiveFilter: radius = 2 289 ground 110 non-ground (27.57%)

(pdal translate filters.smrf Debug) progressiveFilter: radius = 3 236 ground 163 non-ground (40.85%)

(pdal translate filters.smrf Debug) progressiveFilter: radius = 4 215 ground 184 non-ground (46.12%)

(pdal translate filters.smrf Debug) progressiveFilter: radius = 5 202 ground 197 non-ground (49.37%)

(pdal translate filters.smrf Debug) progressiveFilter: radius = 6 202 ground 197 non-ground (49.37%)

(pdal translate filters.smrf Debug) progressiveFilter: radius = 7 200 ground 199 non-ground (49.87%)

(pdal translate filters.smrf Debug) progressiveFilter: radius = 8 145 ground 254 non-ground (63.66%)

(pdal translate filters.smrf Debug) progressiveFilter: radius = 9 126 ground 273 non-ground (68.42%)

(pdal translate filters.smrf Debug) progressiveFilter: radius = 10 126 ground 273 non-ground (68.42%)

(pdal translate filters.smrf Debug) progressiveFilter: radius = 11 126 ground 273 non-ground (68.42%)

(pdal translate filters.smrf Debug) progressiveFilter: radius = 12 126 ground 273 non-ground (68.42%)

(pdal translate filters.smrf Debug) progressiveFilter: radius = 13 126 ground 273 non-ground (68.42%)

(pdal translate filters.smrf Debug) progressiveFilter: radius = 14 126 ground 273 non-ground (68.42%)

(pdal translate filters.smrf Debug) progressiveFilter: radius = 15 126 ground 273 non-ground (68.42%)

(pdal translate filters.smrf Debug) progressiveFilter: radius = 16 126 ground 273 non-ground (68.42%)

(pdal translate filters.smrf Debug) progressiveFilter: radius = 17 126 ground 273 non-ground (68.42%)

(pdal translate filters.smrf Debug) progressiveFilter: radius = 18 126 ground 273 non-ground (68.42%)

(pdal translate writers.las Debug) Wrote 21580 points to the LAS file

(PDAL Debug) Debugging...

(pdal translate Debug) Executing pipeline in standard mode.

(pdal translate filters.smrf Debug) progressiveFilter: radius = 1 380 ground 0 non-ground (0.00%)

(pdal translate filters.smrf Debug) progressiveFilter: radius = 1 377 ground 3 non-ground (0.79%)

[...]

pdal translate filters.smrf Debug) progressiveFilter: radius = 15 284094 ground 238428 non-ground (45.63%)

(pdal translate filters.smrf Debug) progressiveFilter: radius = 16 283262 ground 239260 non-ground (45.79%)

(pdal translate filters.smrf Debug) progressiveFilter: radius = 17 282857 ground 239665 non-ground (45.87%)

(pdal translate filters.smrf Debug) progressiveFilter: radius = 18 281809 ground 240713 non-ground (46.07%)

(pdal translate writers.las Debug) Wrote 47101188 points to the LAS file

(new_clouds) myuser@comp:~/dublin$ ls -la denoised_grnd/ | tail -10

drwxr-xr-x 2 myuser myuser 4096 mar 6 02:03 .

drwxrwxr-x 9 myuser myuser 4096 mar 6 01:37 ..

-rw-r--r-- 1 myuser myuser 7600546 mar 6 01:39 denoised_grnddublin_0_-1.laz

-rw-r--r-- 1 myuser myuser 5872437 mar 6 01:38 denoised_grnddublin_0_1.laz

-rw-r--r-- 1 myuser myuser 221158620 mar 6 02:04 denoised_grnddublin_-1_0.laz

-rw-r--r-- 1 myuser myuser 8243806 mar 6 01:38 denoised_grnddublin_1_0.laz

-rw-r--r-- 1 myuser myuser 2419424 mar 6 01:38 denoised_grnddublin_-1_-1.laz

-rw-r--r-- 1 myuser myuser 1399477 mar 6 01:38 denoised_grnddublin_-1_1.laz

-rw-r--r-- 1 myuser myuser 82192 mar 6 01:37 denoised_grnddublin_1_-1.laz

-rw-r--r-- 1 myuser myuser 448281 mar 6 01:37 denoised_grnddublin_1_1.laz

(new_clouds) myuser@comp:~/dublin$ pdal merge denoised_grnd/*.laz denoised_grnd_f.laz

(new_clouds) myuser@comp:~/dublin$ du -h denoised_grnd_f.laz

236M denoised_grnd_f.laz

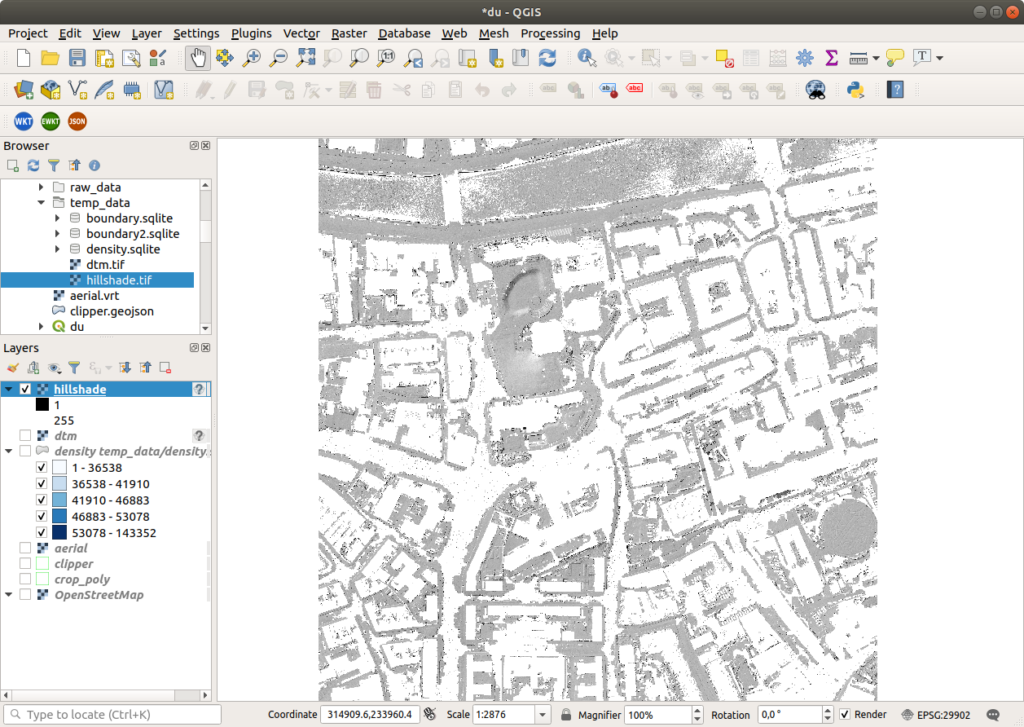

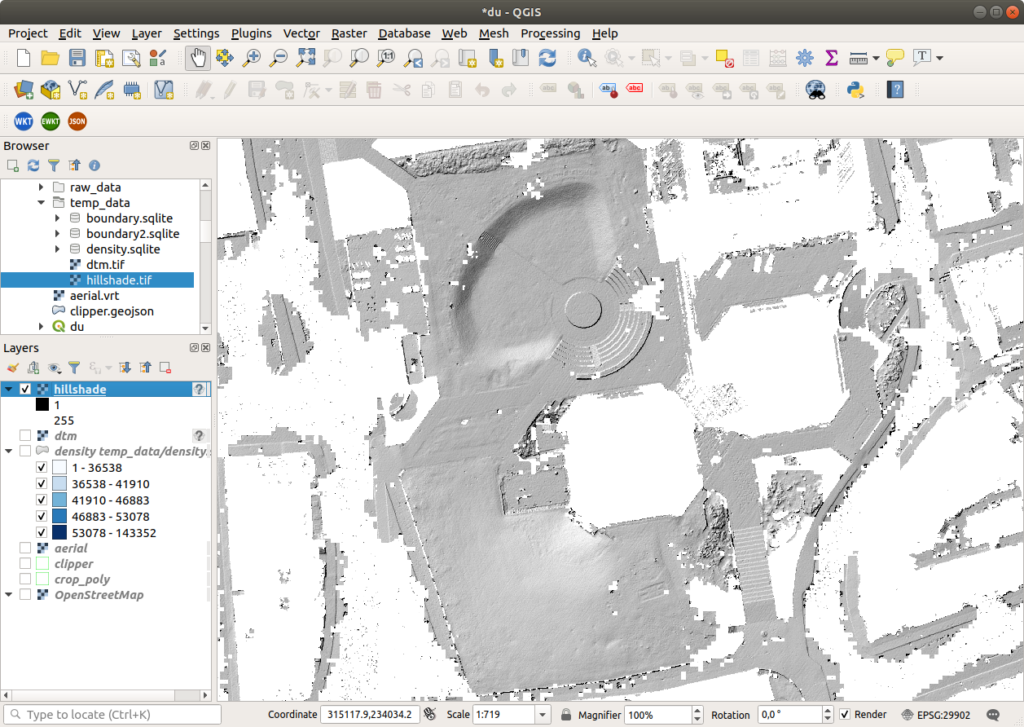

(new_clouds) myuser@comp:~/dublin$Generating a DTM (src)

Generate an elevation model surface using the output from above. Create a file named dtm.json and populate it with the code below, then run pdal pipeline. The GDAL writer creates a raster from a point cloud using an interpolation algorithm. (src)

Because this dataset is focused on buildings, prepare to find only small portions of ground.

{

"pipeline": [

"denoised_grnd_f.laz",

{

"filename":"temp_data/dtm.tif",

"gdaldriver":"GTiff",

"output_type":"all",

"resolution":"1",

"type": "writers.gdal"

}

]

}pdal pipeline dtm.json

du -h temp_data/dtm.tif(new_clouds) myuser@comp:~/dublin$ du -h temp_data/dtm.tif

6,8G temp_data/dtm.tif

(new_clouds) myuser@comp:~/dublin$Generate a Hillshade from the DTM (Digital Terrain Model).

gdaldem hillshade temp_data/dtm.tif \

temp_data/hillshade.tif \

-z 1.0 -s 1.0 -az 315.0 -alt 45.0 \

-of GTiff(new_clouds) myuser@comp:~/dublin$ gdaldem hillshade temp_data/dtm.tif \

> temp_data/hillshade.tif \

> -z 1.0 -s 1.0 -az 315.0 -alt 45.0 \

> -of GTiff

0...10...20...30...40...50...60...70...80...90...100 - done.

(new_clouds) myuser@comp:~/dublin$Creating surface meshes (src)

TODO

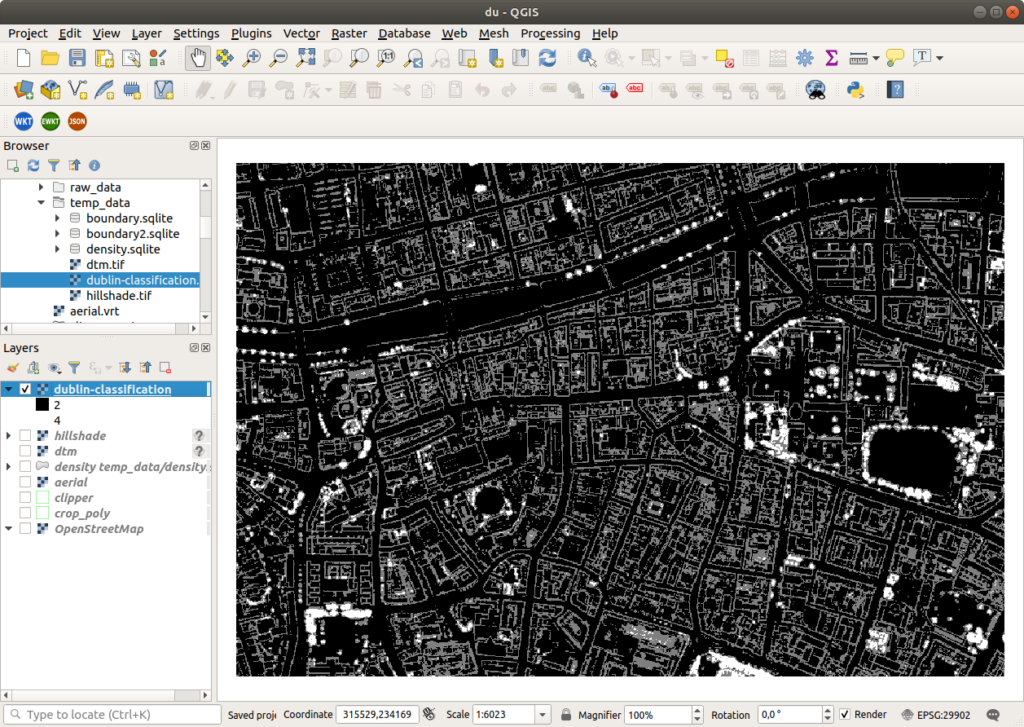

Rasterizing Attributes (src)

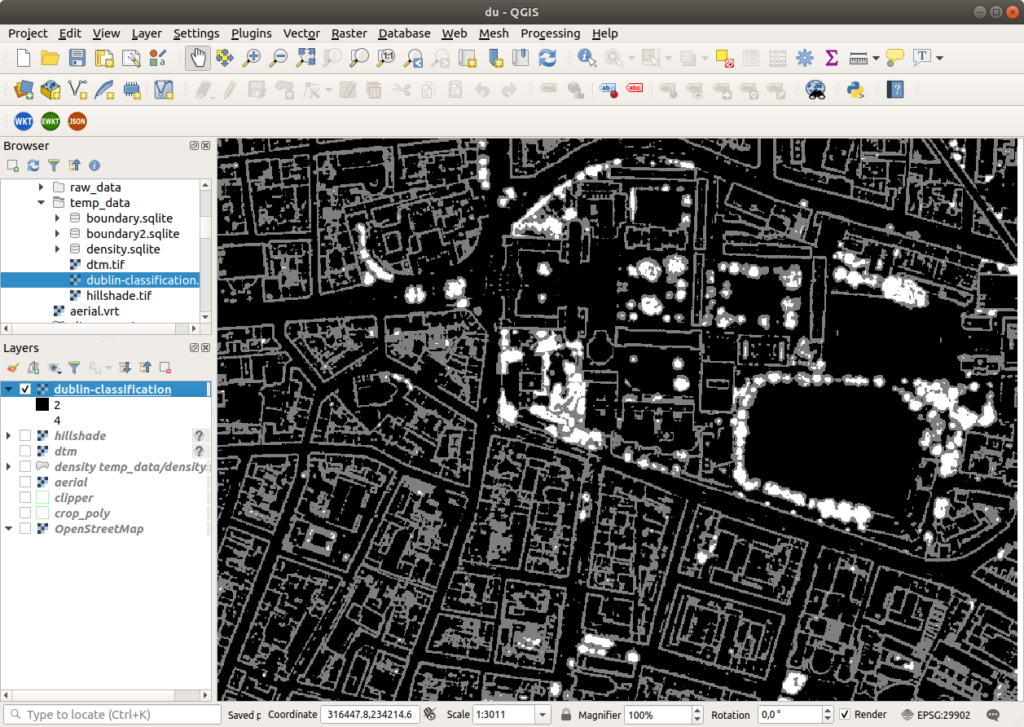

Generate a raster surface using a fully classified point cloud. Create a file named classification.json and populate it with the below code, then run pdal pipeline.

{

"pipeline":[

{

"type":"readers.las",

"filename":"temp_data/dublin-colored.laz"

},

{

"type":"writers.gdal",

"filename":"temp_data/dublin-classification.tif",

"dimension":"Classification",

"data_type":"uint16_t",

"output_type":"mean",

"resolution": 1

}

]

}pdal pipeline classification.json -v 3

du -h temp_data/dublin-classification.tif(new_clouds) myuser@comp:~/dublin$ pdal pipeline classification.json -v 3

(PDAL Debug) Debugging...

(pdal pipeline Debug) Executing pipeline in stream mode.

(new_clouds) myuser@comp:~/dublin$ du -h temp_data/dublin-classification.tif

2,9M temp_data/dublin-classification.tif

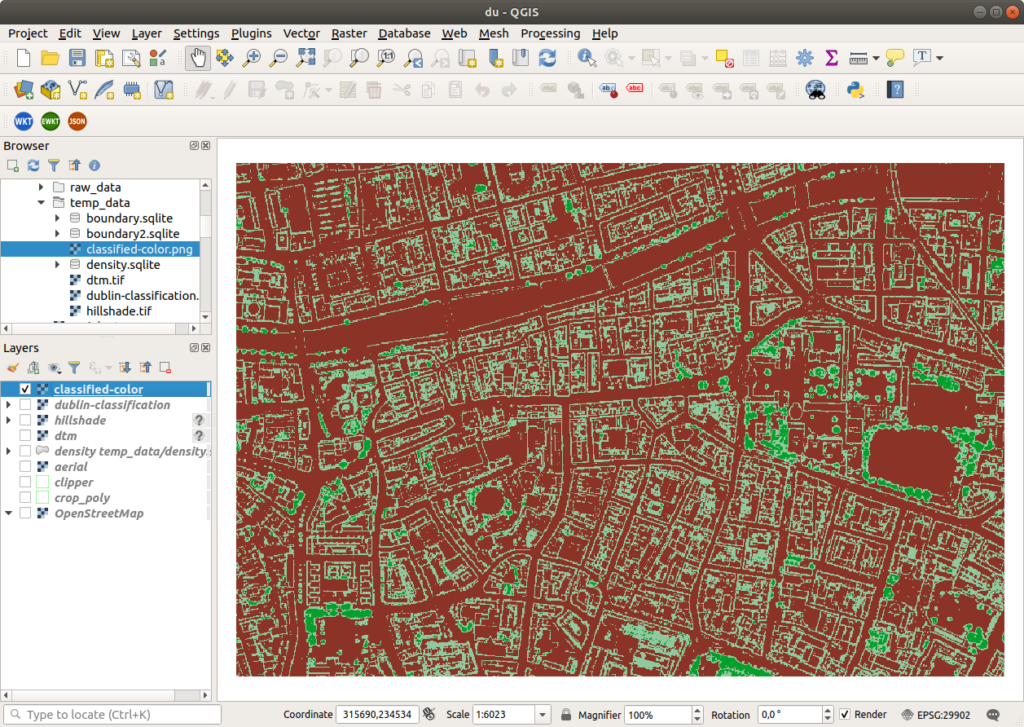

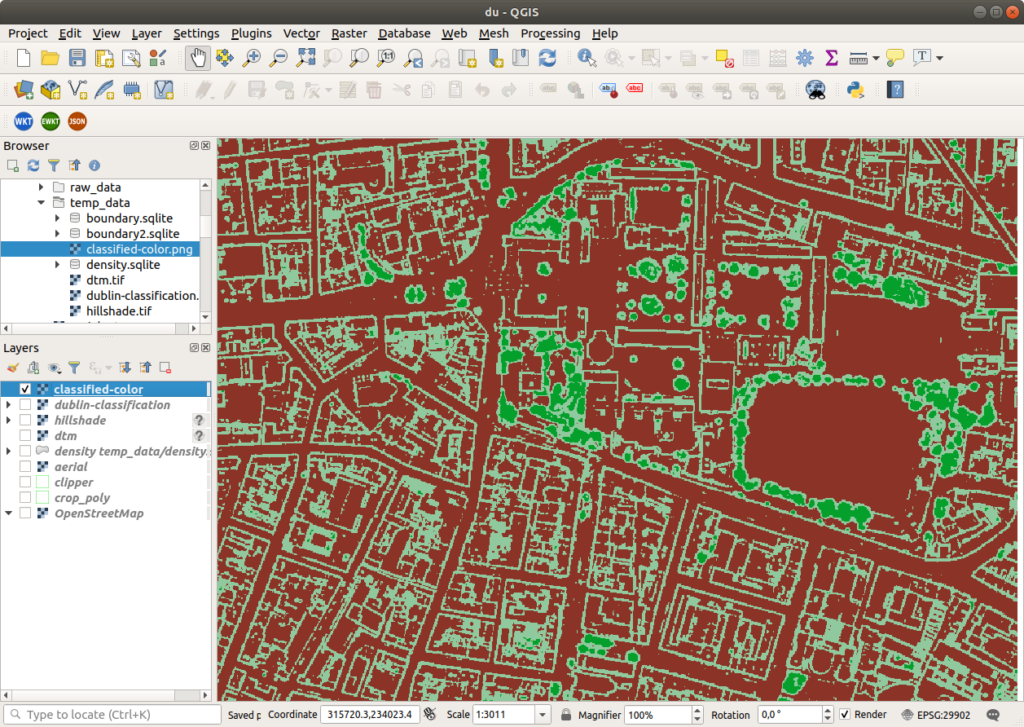

(new_clouds) myuser@comp:~/dublin$Then create a file named colours.txt and enter the code below, that gdaldem can consume to apply colors to the data, and QGIS can use as color map.

# QGIS Generated Color Map Export File

2 139 51 38 255 Ground

3 143 201 157 255 Low Veg

4 5 159 43 255 Med Veg

5 47 250 11 255 High Veg

6 209 151 25 255 Building

7 232 41 7 255 Low Point

8 197 0 204 255 reserved

9 26 44 240 255 Water

10 165 160 173 255 Rail

11 81 87 81 255 Road

12 203 210 73 255 Reserved

13 209 228 214 255 Wire - Guard (Shield)

14 160 168 231 255 Wire - Conductor (Phase)

15 220 213 164 255 Transmission Tower

16 214 211 143 255 Wire-Structure Connector (Insulator)

17 151 98 203 255 Bridge Deck

18 236 49 74 255 High Noise

19 185 103 45 255 Reserved

21 58 55 9 255 255 Reserved

22 76 46 58 255 255 Reserved

23 20 76 38 255 255 Reserved

26 78 92 32 255 255 Reservedgdaldem color-relief temp_data/dublin-classification.tif colours.txt temp_data/classified-color.png -of PNG(new_clouds) myuser@comp:~/dublin$ gdaldem color-relief temp_data/dublin-classification.tif colours.txt temp_data/classified-color.png -of PNG

0...10...20...30...40...50...60...70...80...90...100 - done.

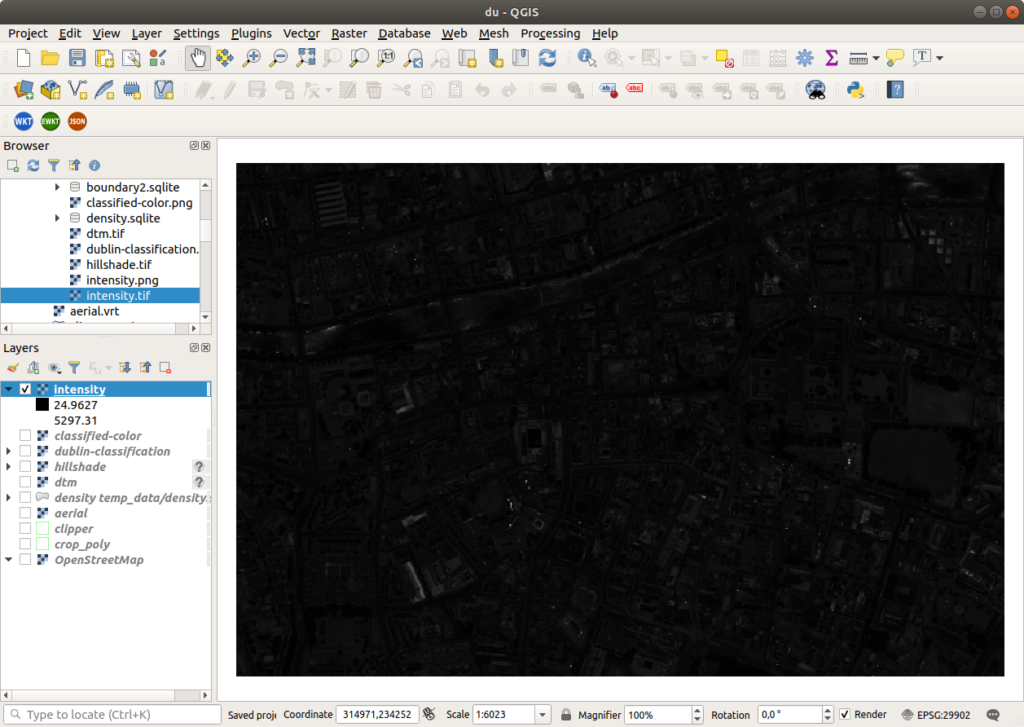

(new_clouds) myuser@comp:~/dublin$Then generate a relative intensity image.

pdal pipeline classification.json \

--writers.gdal.dimension="Intensity" \

--writers.gdal.data_type="float" \

--writers.gdal.filename="temp_data/intensity.tif" \

-v 3

gdal_translate temp_data/intensity.tif temp_data/intensity.png -of PNG(new_clouds) myuser@comp:~/dublin$ pdal pipeline classification.json \

> --writers.gdal.dimension="Intensity" \

> --writers.gdal.data_type="float" \

> --writers.gdal.filename="temp_data/intensity.tif" \

> -v 3

(PDAL Debug) Debugging...

(pdal pipeline Debug) Executing pipeline in stream mode.

(new_clouds) myuser@comp:~/dublin$ gdal_translate temp_data/intensity.tif temp_data/intensity.png -of PNG

Input file size is 1501, 1001

Warning 6: PNG driver doesn't support data type Float32. Only eight bit (Byte) and sixteen bit (UInt16) bands supported. Defaulting to Byte

0...10...20...30...40...50...60...70...80...90...100 - done.

(new_clouds) myuser@comp:~/dublin$Plotting a histogram using Python (src)

TODO: killed. The image above is from the Taal Volcano experiments.

{

"pipeline": [

{

"filename": "temp_data/dublin-colored.laz"

},

{

"type": "filters.python",

"function": "make_plot",

"module": "anything",

"pdalargs": "{\"filename\":\"temp_data/histogram.png\"}",

"script": "histogram.py"

},

{

"type": "writers.null"

}

]

}# import numpy

import numpy as np

# import matplotlib stuff and make sure to use the

# AGG renderer.

import matplotlib

matplotlib.use('Agg')

import matplotlib.pyplot as plt

import matplotlib.mlab as mlab

# This only works for Python 3. Use

# StringIO for Python 2.

from io import BytesIO

# The make_plot function will do all of our work. The

# filters.programmable filter expects a function name in the

# module that has at least two arguments -- "ins" which

# are numpy arrays for each dimension, and the "outs" which

# the script can alter/set/adjust to have them updated for

# further processing.

def make_plot(ins, outs):

# figure position and row will increment

figure_position = 1

row = 1

fig = plt.figure(figure_position, figsize=(6, 8.5), dpi=300)

for key in ins:

dimension = ins[key]

ax = fig.add_subplot(len(ins.keys()), 1, row)

# histogram the current dimension with 30 bins

n, bins, patches = ax.hist( dimension, 30,

facecolor='grey',

alpha=0.75,

align='mid',

histtype='stepfilled',

linewidth=None)

# Set plot particulars

ax.set_ylabel(key, size=10, rotation='horizontal')

ax.get_xaxis().set_visible(False)

ax.set_yticklabels('')

ax.set_yticks((),)

ax.set_xlim(min(dimension), max(dimension))

ax.set_ylim(min(n), max(n))

# increment plot position

row = row + 1

figure_position = figure_position + 1

# We will save the PNG bytes to a BytesIO instance

# and the nwrite that to a file.

output = BytesIO()

plt.savefig(output,format="PNG")

# a module global variable, called 'pdalargs' is available

# to filters.programmable and filters.predicate modules that contains

# a dictionary of arguments that can be explicitly passed into

# the module by the user. We passed in a filename arg in our `pdal pipeline` call

if 'filename' in pdalargs:

filename = pdalargs['filename']

else:

filename = 'histogram.png'

# open up the filename and write out the

# bytes of the PNG stored in the BytesIO instance

o = open(filename, 'wb')

o.write(output.getvalue())

o.close()

# filters.programmable scripts need to

# return True to tell the filter it was successful.

return Truepython -m pip install -U matplotlib==3.2.0rc1

pdal pipeline histogram.json(new_clouds) myuser@comp:~/dublin$ pdal pipeline histogram.json

anything:46: UserWarning: Attempting to set identical left == right == 0 results in singular transformations; automatically expanding.

(new_clouds) myuser@comp:~/dublin$Batch Processing (src)

Example syntax. There is another example in Identifying ground section.

# Create directory unless exists

mkdir -pv gtiles

# List files in Windows dos and iterate them

# use verbose to view logs

for %f in (*.*) do pdal pipeline denoise_ground.json ^

--readers.las.filename=%f ^

--writers.las.filename=../gtiles/%f

--verbose 4

# Create directory unless exists

mkdir -pv denoised_grnd

# List files in Linux shell and iterate them

ls laz_tiles/*.laz | \

parallel -I{} pdal pipeline denoise_ground.json \

--readers.las.filename={} \

--writers.las.filename=denoised_grnd/denoised_grnd{/.}.laz \

--verbose 4✅ Repeated the steps for Yosemite Valley

✅ Repeated the steps for an urban area

What’s next?

☑️ Try LasTools, too

☑️ The 3D in Blender. 😀

☑️ Manual classification and roof extraction. 🤩

Reminder: The colored point cloud data that we have used throughout this post can be viewed at https://potree.entwine.io/data/view.html?r=”https://maptheclouds.com/ept_dublin_colored/”.

Below, I leave you a Potree mix of this piece of Dublin.